Welcome

Database Management System

Course: COMP 163

Time: 08:00 AM - 09:15 AM on Monday, Wednesday, Friday

Location: John T Chambers Technology Center 114 (CTC 114)

Module 1

Introduction to Database Management Systems

Agenda

- Start Module 1

- Review Topics

- Review Syllabus

What is a Database?

- A database is an organized place to store data

- Built for large amounts of data

- Built to keep data consistent over time

- Data is stored so it can be found again later

Database: Store → Find

Database as Storage

- A database is an organized place to store data

- Data is structured

- Storage is intentional, not ad hoc

Flower

------

id: 101

name: "Rose"

color: "Red"

price: 2.50

Large Amounts of Data

- Databases handle large volumes of data

- Much more than what fits in memory

- Designed to grow over time

Flowers

-------

101 | Rose | Red | 2.50

102 | Tulip | Yellow | 1.75

103 | Lily | White | 3.00

104 | Daisy | White | 1.25

105 | Orchid | Purple | 4.50

...

(Thousands of rows)

Consistency Over Time

- Databases keep data consistent

- Rules prevent invalid entries

- Same data seen by all users

Rule:

price >= 0

✔ Rose | 2.50

✔ Tulip | 1.75

✘ Lily | -3.00 ← rejected

Finding Data Again

- Data is stored so it can be found again later

- Queries let us ask questions

- Results are predictable and repeatable

SELECT name, price

FROM flowers

WHERE color = 'White';

Result:

Lily | 3.00

Daisy | 1.25

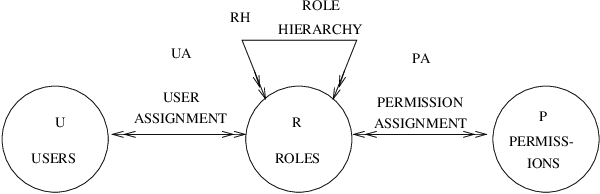

Why a Database Management System (DBMS)?

What is a DBMS?

- A DBMS is software that manages a database

- It sits between applications and stored data

- Applications do not access data files directly

App / Website / API / Software

↓

--------- DBMS ---------

↓

Database (disk / SSD)

Databases Before DBMS

| System | How it Stores Data | How you Query | Main Problem |

|---|---|---|---|

| Paper | Handwritten records | Search by reading | Slow, hard to scale |

| Files (folders) | Documents in directories | Manual naming + search | Duplicates, inconsistent versions |

| Spreadsheets | Rows and columns | Filters and formulas | Hard for multi user + rules |

| Custom App (ad hoc) | Program specific format | Whatever the app supports | No standard, hard to maintain |

Problem: Duplication → Inconsistency

Before 1900: Manual Systems

- Before 1440: Manual Writing: Handwritten records

- 1440: Printing Press: Faster copying

- 1800s: Shipping Letters: ~10–14 days by ship

Edgar F. Codd

- Born: August 19, 1923

- Known for: Relational Database Model

- Key Contribution: Relational Algebra, Normal Forms

- Impact: Foundation of SQL and modern databases

- Award: ACM Turing Award (1981)

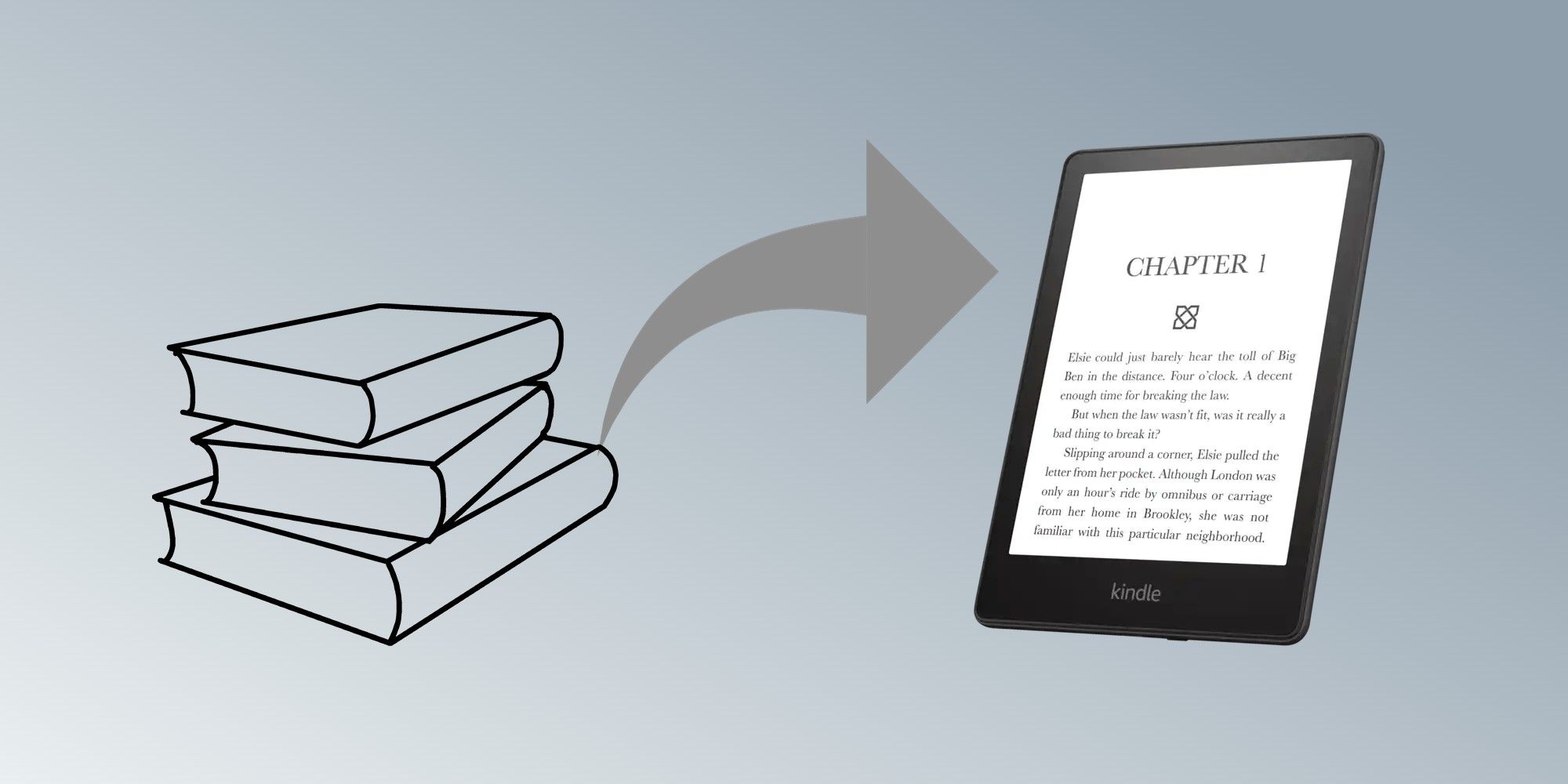

20th Century: The Digital Revolution

- 1970: Relational Database

- 1980s: SQL: Standard query language

- 1990s: Client Server: Multi user databases

- 2000s: Digitalization: Paper to databases

21st Century: Connectivity and Real-Time Revolution

- Cloud: Scalable remote databases

- Big Data: Massive data processing

- AI: Smarter data analysis

- Edge: Low latency storage

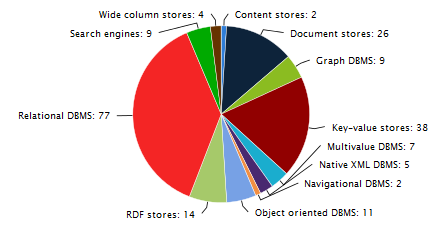

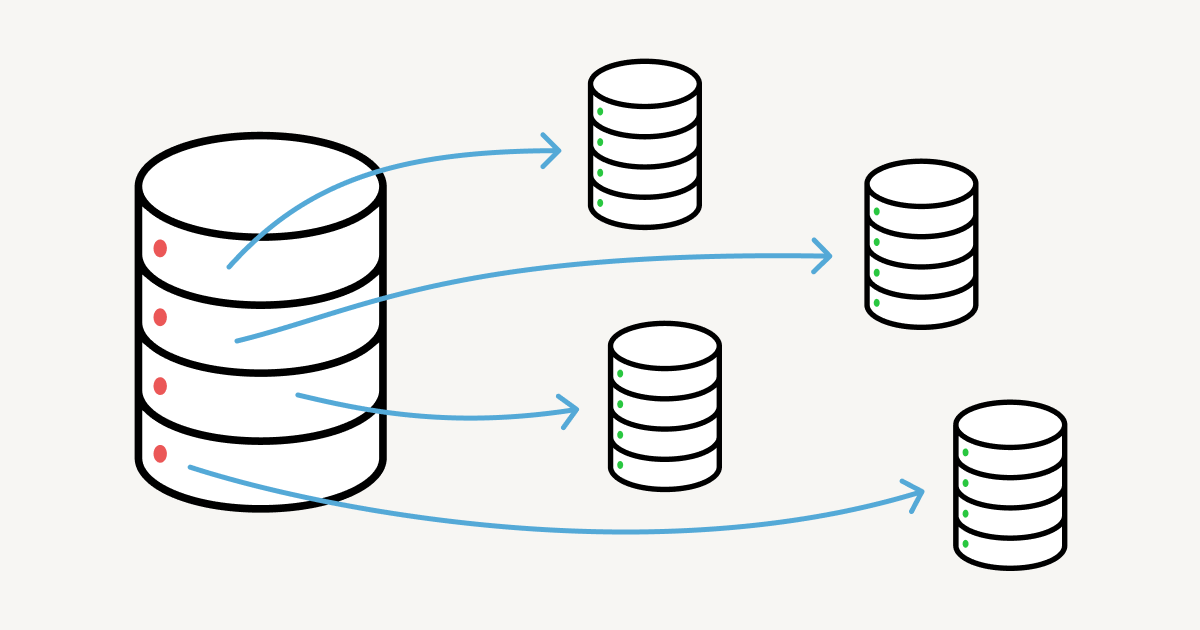

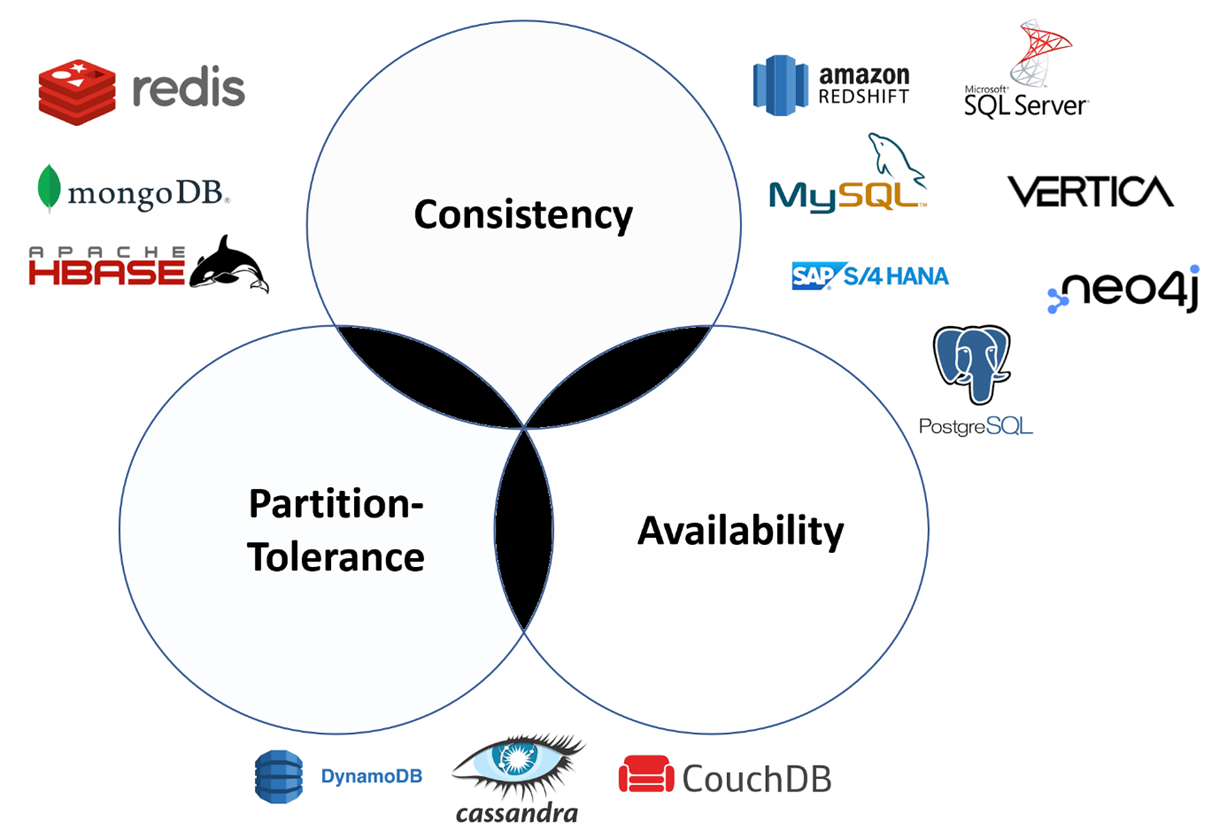

Types of Databases

| Type | Data Shape | Good For | Example |

|---|---|---|---|

| Relational | Tables (rows/columns) | Business data, consistency | PostgreSQL, MySQL |

| Document | JSON like documents | Flexible fields, web apps | MongoDB |

| Key Value | Key → value | Caching, sessions | Redis |

| Column Store | Columns (analytics) | Fast aggregates | ClickHouse |

| Graph | Nodes + edges | Relationships, networks | Neo4j |

- Relational is our main focus

- Others exist for different data and workloads

- Same goal: store and query data

Choose by Data Shape

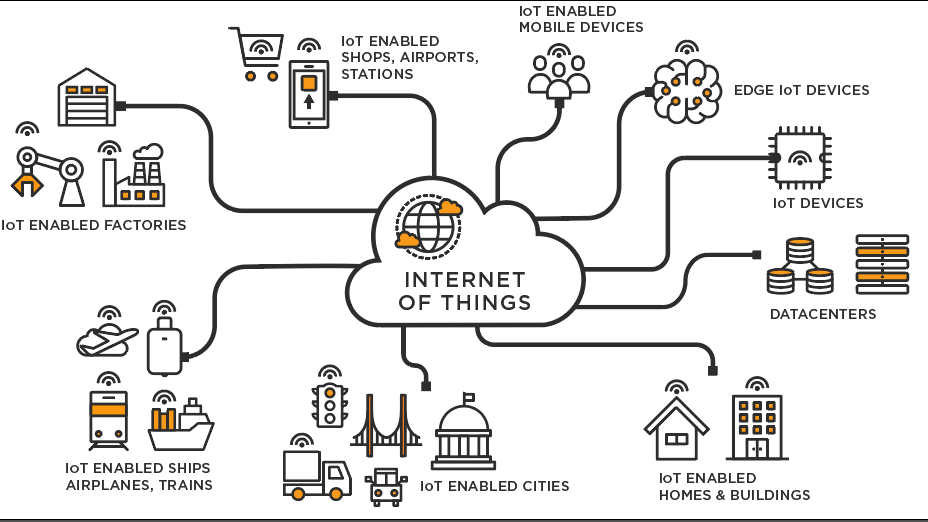

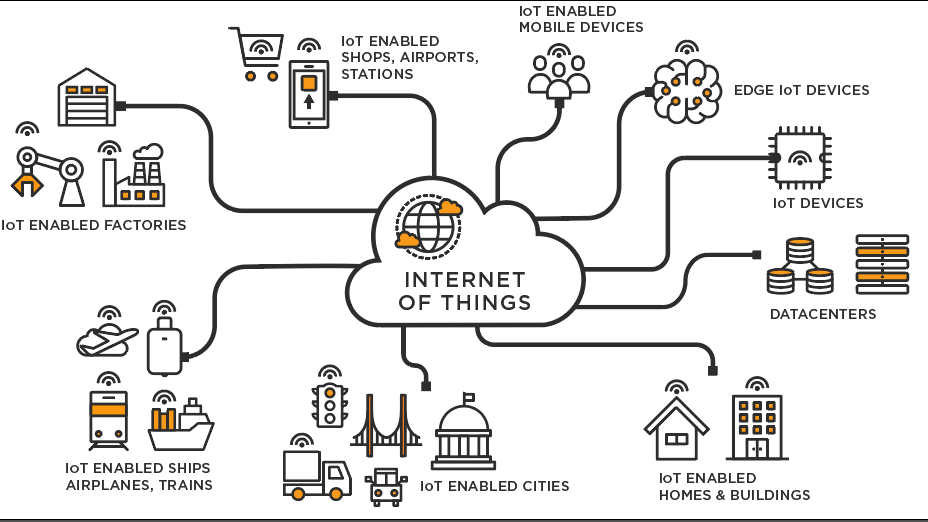

Why a Database Management System 🤔

- Many devices produce data continuously

- Many users need shared access

- Many apps depend on the same source of truth

- Apps read and write data

- APIs connect services

- DBMS keeps data consistent

- Transactions ensure correct updates

- Concurrency control supports many users at once

- Constraints prevent invalid data

- Indexes improve query performance

- Security rules control who can access what

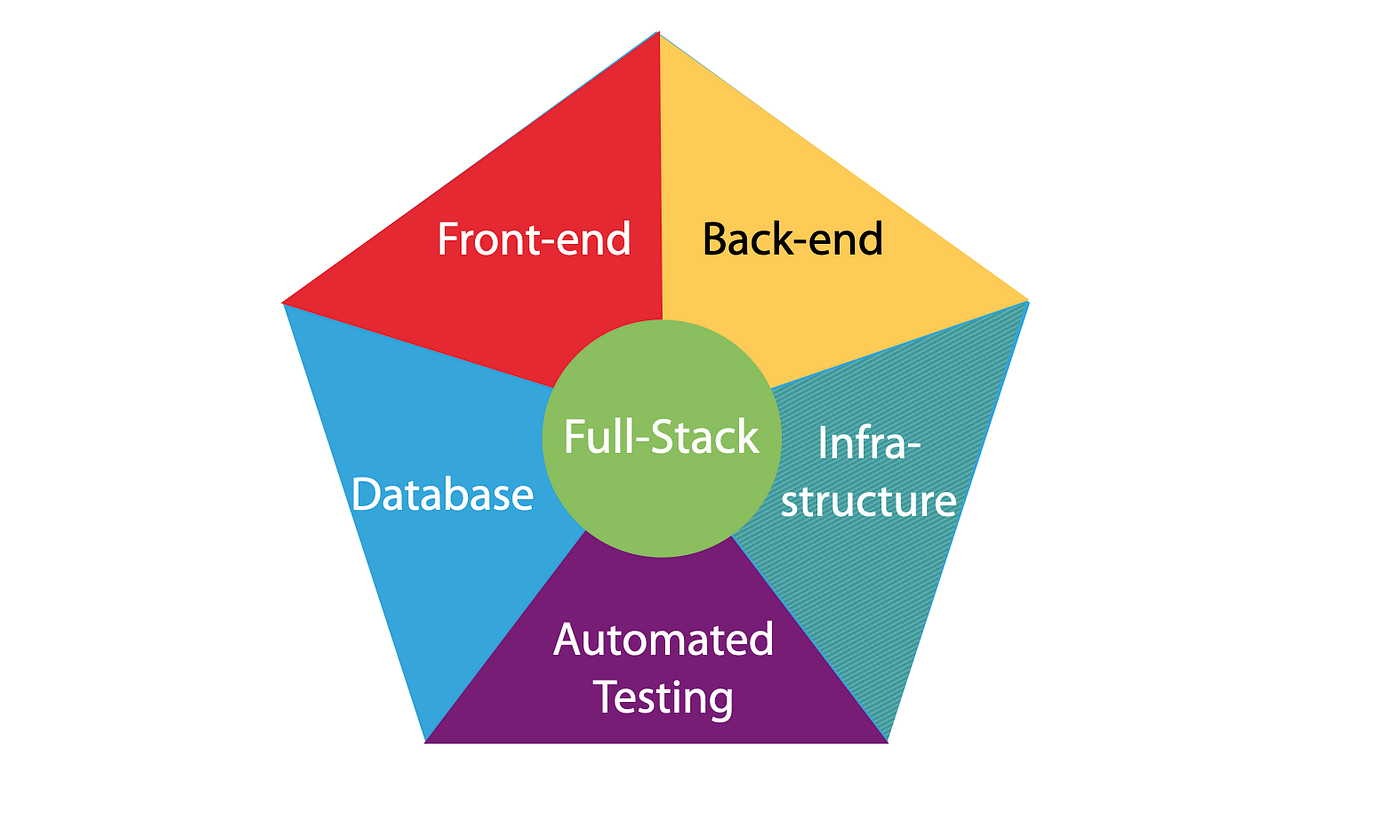

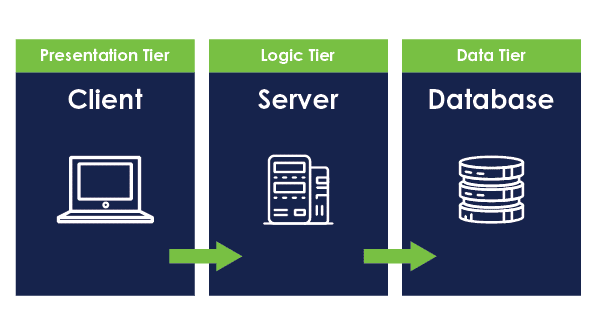

Three Core Components of a Software System

Each component has a distinct role, but all three must work together.

LLMs and SQL: Human Review Still Required

- LLMs can generate SQL, results are not always correct

- Generated queries may:

- use invalid syntax

- reference the wrong tables or columns

- misinterpret the intent of the question

- Incorrect SQL can:

- return misleading results

- update the wrong rows

- delete data permanently

-- Generated by an LLM

DELETE FROM orders;

-- Missing WHERE clause

-- Result: all rows deleted

Conclusion: LLMs can assist, but humans must review SQL before execution.

What Skill Changes

- Software evolves quickly

- Frameworks change every few years

- Languages rise and fall

- Tools are frequently replaced

- Hardware evolves rapidly

- Faster CPUs and storage

- New architectures and platforms

- Short upgrade cycles

- Data lasts much longer than software

- Databases persist across generations of technology

- Applications are rewritten, but data remains

- Good database design protects long-term value

Why a Relational Database Management System?

- Tables match how we organize records

- SQL gives a standard interface

- Constraints protect correctness

- Transactions protect consistency

Relational DBMS: Core Pieces

Relational Model: The Idea

- Entity → a table

- Row → a record

- Key → identity + links

flowers(id, name, color, price)

orders(id, flowerId, qty)

| StudentId | Name | Major |

|---|---|---|

| 101 | Ana | CS |

| 102 | Ben | EE |

| 103 | Cam | CS |

Keys Connect Tables

Agenda

- Review Module 1 (DBMS foundations)

- CREATE: database and table structure

- SQLite dialect and file based model

- SQLite data types and type affinity

- Flower domain schema design

- Preview: installation and coding

Question

SELECT name, price

FROM flowers

WHERE price > 2.00;

Can this query be used in programs written in Java, C++, and Python?

- No, SQL queries are specific to Java

- Each program requires a different query syntax

- Yes, but only if the database is SQLite

- Yes, SQL is programming language

Answer (click)

Show answer

Correct: D

SQL is a database query language. The same SQL query can be sent from Java, C++, Python, or any other language through a database API.

Java

Connection conn = DriverManager.getConnection(url);

Statement stmt = conn.createStatement();

ResultSet rs = stmt.executeQuery("""

SELECT name, price

FROM flowers

WHERE price > 2.00

""");

Python

conn = sqlite3.connect("flowers.db")

cur = conn.cursor()

cur.execute("""

SELECT name, price

FROM flowers

WHERE price > 2.00

""")

C++

sqlite3* db;

sqlite3_open("flowers.db", &db);

sqlite3_exec(db,

"SELECT name, price FROM flowers WHERE price > 2.00",

nullptr, nullptr, nullptr);

JavaScript

const db = new sqlite3.Database("flowers.db");

db.all(

`SELECT name, price

FROM flowers

WHERE price > 2.00`, callback);

Question

Which component is executing the SQL query?

- The programming language runtime

- The operating system

- The database managment system

- The text editor used to write the query

Answer (click)

Show answer

Correct: C

The DBMS parses, plans, and executes SQL. Programming languages only send the query and receive results.

PROGRAM

Java · Python · C++ · JS

send SQL string

DBMS

parse

plan

execute

DATABASE

flowers.db

tables

rows

indexes

Question

What is the role of a programming language when working with a database?

- To replace SQL entirely

- To store data directly on disk

- To send SQL commands to the DBMS

- To define how tables are physically stored

Answer (click)

Show answer

Correct: C

Applications use programming languages to communicate with the DBMS, but SQL remains the interface for data access.

Imperative

# step-by-step instructions

# how to do it

for flower in flowers:

if flower.price > 2.00:

print(flower.name, flower.price)

- Tell the computer how

- Explicit steps

- Order matters

Declarative

-- describe the result

-- what you want

SELECT name, price

FROM flowers

WHERE price > 2.00;

- Tell the DBMS what

- DBMS decides how

- SQL is descriptive

Query A:

SELECT * FROM flowers;

Query B:

INSERT INTO flowers(name, price)

VALUES ('Rose', 2.50);

- Does the order of these queries matter?

- Would the result be the same if we swap them?

Show answer

- Yes, sometimes order matters

- If queries depend on earlier changes

- Read-only operations are not affected by order

- SQL is descriptive, but execution order still matters

From Concepts to Structure

- So far: what databases and DBMSs are

- Now: how structure is defined

- Focus today is CREATE, not querying

What Does CREATE Mean?

- CREATE defines structure

- No data is stored yet

- It defines tables, columns, and rules

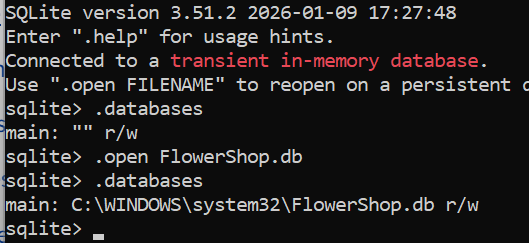

Database vs Table (SQLite)

- Database: one file (

.db) - Table: structure inside the file

- SQLite has no server process

flowers.db

├─ flowers

├─ suppliers

└─ orders

PROGRAM

Java · Python · C++ · JS

send SQL string

DBMS

parse

plan

execute

DATABASE

flowers.db

tables

rows

indexes

CLI

sqlite3 flowers.db

enter SQL

SQLite as a SQL Dialect

- SQLite follows standard SQL closely

- Some syntax differences exist

- Most important difference: typing model

SQLite Type System

- SQLite uses dynamic typing

- Columns have type affinity

- Values decide their type at runtime

Five Core SQLite Data Types

| Type | Description | Flower Example |

|---|---|---|

| INTEGER | Whole numbers | flower_id |

| REAL | Decimal numbers | price |

| TEXT | Strings | name, color |

| BLOB | Binary data | image |

| NULL | Missing value | unknown_color |

Flower Domain

- Simple and familiar example

- Focus on structure, not complexity

- Used consistently across examples

Flower Table: Logical Design

flowers(

id,

name,

color,

price

)

Choosing SQLite Data Types

| Column | Type | Reason |

|---|---|---|

| id | INTEGER | Primary identifier |

| name | TEXT | Flower name |

| color | TEXT | Readable attribute |

| price | REAL | Decimal value |

CREATE TABLE (Preview)

CREATE TABLE flowers (

id INTEGER PRIMARY KEY,

name TEXT,

color TEXT,

price REAL

);

Constraints Still Matter

- Flexible typing does not mean no rules

- Constraints protect data quality

price REAL CHECK (price >= 0)

What We Are Not Doing Yet

- No INSERT

- No SELECT

- Structure comes first

Next: Installing SQLite

- sqlite3 command line tool

- Verify installation

- Create first database file

Then: Hands On SQL

- Create tables

- Insert flower data

- Run simple queries

Summary

- CREATE defines structure

- SQLite uses type affinity

- Good design still matters

Agenda

- Concept: Database and Schema

- SQLite data types

- Designing a flower database

- Preparing for installation and coding

What Does CREATE Mean?

- CREATE defines structure, not data

- It tells the DBMS what tables look like

- No rows yet, only rules

Database vs Table

- Database: container (file in SQLite)

- Table: structured collection of rows

- SQLite database = single

.dbfile

flowers.db

├─ flowers

├─ suppliers

└─ orders

SQLite Is File Based

- No server to start

- No username or password by default

- Database is a file you can copy

SQLite Dialect of SQL

- SQLite supports most standard SQL

- Some differences from MySQL / PostgreSQL

- Flexible typing (important!)

SQLite Type System

- SQLite uses dynamic typing

- Columns have an affinity, not a strict type

- Values decide their type at runtime

Five Core SQLite Data Types

| Type | Description | Flower Example |

|---|---|---|

| INTEGER | Whole numbers | flower_id |

| REAL | Decimal numbers | price |

| TEXT | Strings | name, color |

| BLOB | Binary data | image |

| NULL | Missing value | unknown_color |

Type Affinity in SQLite

- INTEGER, TEXT, REAL are preferred

- SQLite does not strictly enforce types

- Design discipline matters

Flower Domain

- We model a simple flower shop

- Flowers, prices, colors, stock

- Simple and familiar domain

Flower Table: First Design

flowers(

id,

name,

color,

price

)

Choosing Data Types

| Column | Type | Reason |

|---|---|---|

| id | INTEGER | Unique identifier |

| name | TEXT | Flower name |

| color | TEXT | Human readable |

| price | REAL | Decimal value |

CREATE TABLE (Preview)

CREATE TABLE flowers (

id INTEGER PRIMARY KEY,

name TEXT,

color TEXT,

price REAL

);

Primary Key in SQLite

INTEGER PRIMARY KEYis special- Aliases the internal rowid

- Auto increments by default

Constraints Matter

- Even with flexible typing

- Rules protect data quality

price REAL CHECK (price >= 0)

What We Are NOT Doing Yet

- No inserts

- No queries

- Focus is structure

Next: Installing SQLite

- sqlite3 command line tool

- Verify installation

- Create first database file

Then: Hands On Coding

- Create tables

- Insert flower data

- Run simple queries

Summary

- CREATE defines structure

- SQLite uses flexible typing

- Good design still matters

Case Study: Furniture Store Inventory (SQLite)

- Goal: Draft each query

- After each step: run a SELECT to verify

- Then: new requirement → ALTER TABLE → verify again

Step 1: Create the Table

- Create a table named

inventory - Columns:

idINTEGER PRIMARY KEYnameTEXT NOT NULLcategoryTEXT NOT NULLpriceREAL NOT NULLquantityINTEGER NOT NULL

Show answer

CREATE TABLE inventory (

id INTEGER PRIMARY KEY,

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL,

quantity INTEGER NOT NULL

);

Verify 1

- Verify the table exists and columns are correct

- Use a SQLite CLI command (not SQL)

Show answer

.schema inventory

Step 2: Insert Data

- Insert three rows:

- Oak Table, Table, 299.99, 4

- Leather Sofa, Sofa, 899.00, 2

- Desk Lamp, Lamp, 39.50, 12

- Write three INSERT statements

Show answer

INSERT INTO inventory (name, category, price, quantity)

VALUES ('Oak Table', 'Table', 299.99, 4);

INSERT INTO inventory (name, category, price, quantity)

VALUES ('Leather Sofa', 'Sofa', 899.00, 2);

INSERT INTO inventory (name, category, price, quantity)

VALUES ('Desk Lamp', 'Lamp', 39.50, 12);

Verify 2

- Read the inventory table

- Show: id, name, category, price, quantity

Show answer

SELECT id, name, category, price, quantity

FROM inventory;

Business Question

- List items with low stock

- Definition: quantity <= 3

- Return: name, quantity

Show answer

SELECT name, quantity

FROM inventory

WHERE quantity <= 3;

New Requirement

- The store now needs to track the supplier for each item

- Add a new column:

supplier(TEXT) - Then fill it for existing rows

Step 3: ALTER TABLE

- Add a new column

suppliertoinventory - Type: TEXT

Show answer

ALTER TABLE inventory

ADD COLUMN supplier TEXT;

Verify 3

- Verify the new column exists

- Use a SQLite CLI command

Show answer

.schema inventory

Step 4: Update Existing Rows

- Set supplier values:

- Oak Table → Pacific Furnishings

- Leather Sofa → West Coast Leather

- Desk Lamp → BrightHome

- Write three UPDATE statements

Show answer

UPDATE inventory

SET supplier = 'Pacific Furnishings'

WHERE name = 'Oak Table';

UPDATE inventory

SET supplier = 'West Coast Leather'

WHERE name = 'Leather Sofa';

UPDATE inventory

SET supplier = 'BrightHome'

WHERE name = 'Desk Lamp';

Verify 4

- Read and confirm supplier values

- Return: id, name, supplier, quantity

Show answer

SELECT id, name, supplier, quantity

FROM inventory;

Final Check

- List all items supplied by BrightHome

- Return: name, category, quantity

Show answer

SELECT name, category, quantity

FROM inventory

WHERE supplier = 'BrightHome';

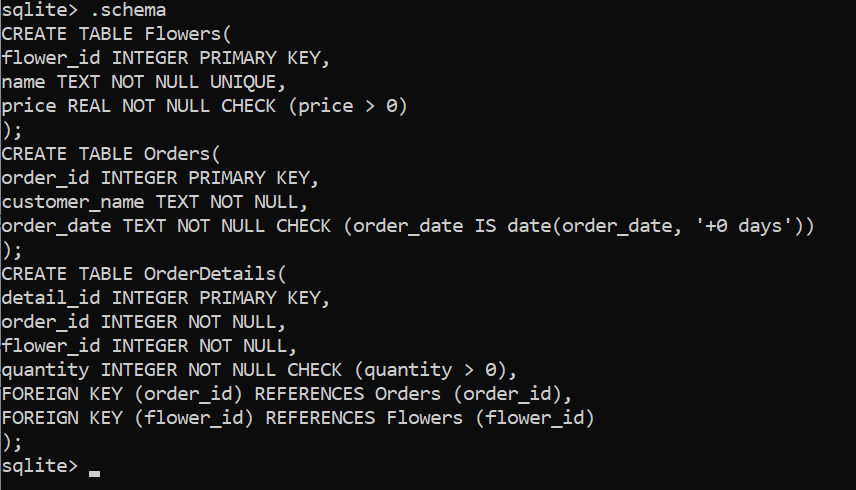

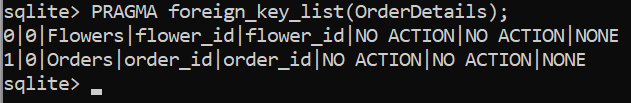

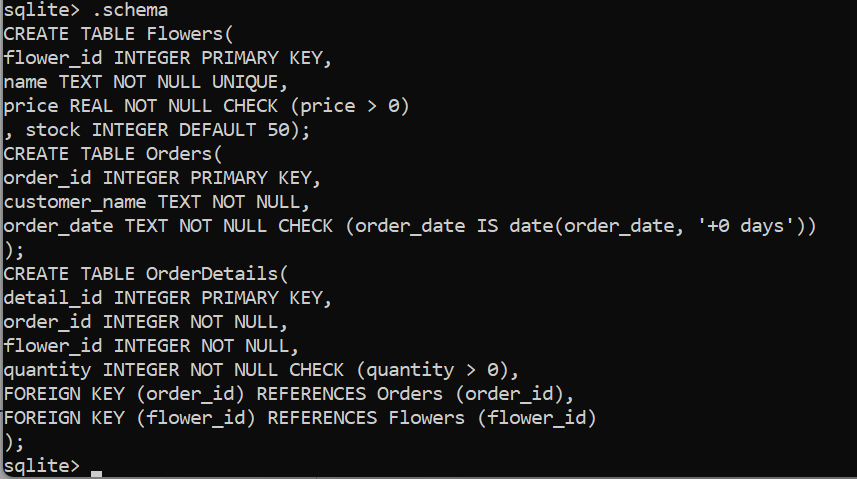

Agenda

- Primary key

- Constraints:

UNIQUE,NOT NULL,DEFAULT - Relationships: foreign keys

- Lab 1 overview

- Homework 1 overview

Primary Key

First the concept, then the SQL (using our Furniture Store case study).

Definition (No Meaning)

- A primary key is a column (or columns) to identify rows

- It is an identifier only (no semantic meaning required)

- It exists so rows can be referenced precisely

inventory

---------

id | name | category | price | quantity

1 | Oak Table | Table | 299.99 | 4

2 | Leather Sofa | Sofa | 899.00 | 2

3 | Desk Lamp | Lamp | 39.50 | 12

Row Identity

What a Primary Key Enforces

- Uniqueness (no duplicates)

- Stability (used for references)

- One row ↔ one key value

Good:

id = 1, 2, 3, 4, ...

Bad:

id = 1, 1, 2, 3 (duplicate)

Why We Need It

- Target exactly one row

- Avoid accidental multi-row updates

- Enable relationships later (foreign keys)

Without a key:

UPDATE inventory

SET price = 10

WHERE category = 'Lamp';

Could affect many rows.

Primary Key in Our Case Study

- Table:

inventory - Primary key:

id - Chosen as a simple numeric identifier

id is not "meaningful".

It is just an identifier.

name/category can change

id should not.

SQL: Defining the Primary Key

CREATE TABLE inventory (

id INTEGER PRIMARY KEY,

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL,

quantity INTEGER NOT NULL

);

SQLite: Auto-Generated IDs

INTEGER PRIMARY KEYcan be auto-generated- You can omit

idduring INSERT - SQLite assigns a new integer value

INSERT INTO inventory (name, category, price, quantity)

VALUES ('Oak Table', 'Table', 299.99, 4);

-- id assigned automatically

AUTOINCREMENT (When to Use)

- For stricter "never reuse old ids" behavior

- Prevents reusing a deleted id later

- Usually not needed, but good to know

id INTEGER PRIMARY KEY AUTOINCREMENT

SQL: Primary Key with AUTOINCREMENT

CREATE TABLE inventory (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL,

quantity INTEGER NOT NULL

);

SQLite will keep increasing id values even if rows are deleted.

ID Generation Concept

Verify the ID Was Assigned

- Always run a SELECT after inserts

- Confirm rows and IDs match expectations

SELECT id, name, category, price, quantity

FROM inventory;

Safe Updates Use the Key

- Primary key targets one row

- Reduces risk of mass updates

- More reliable than matching on names

UPDATE inventory

SET price = 279.99

WHERE id = 1;

Safe Deletes Use the Key

- Primary key targets one row

- Prevents deleting multiple matches

- Use with care

DELETE FROM inventory

WHERE id = 1;

Key Takeaway

Primary keys exist for correctness and control.

Constraints

Rules enforced by the database to protect data quality.

Common Constraints

- NOT NULL: value must exist

- UNIQUE: no duplicates allowed

- DEFAULT: value assigned automatically

price REAL NOT NULL

sku TEXT UNIQUE

quantity INTEGER DEFAULT 0

NOT NULL

Prevents missing critical information.

- Column must always have a value

- INSERT fails if value is missing

- Useful for required attributes

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL

UNIQUE

Prevents duplicate values across rows.

- Ensures values do not repeat

- Often used for identifiers

- Different from primary key

sku TEXT UNIQUE

DEFAULT

Provides a value when none is supplied.

- Reduces required input

- Prevents NULL values

- Encodes common assumptions

quantity INTEGER DEFAULT 0

Updated Inventory Table

CREATE TABLE inventory (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

category TEXT NOT NULL,

sku TEXT UNIQUE,

price REAL NOT NULL,

quantity INTEGER DEFAULT 0

);

Why Constraints Matter

- Catch errors early

- Protect data integrity

- Reduce application complexity

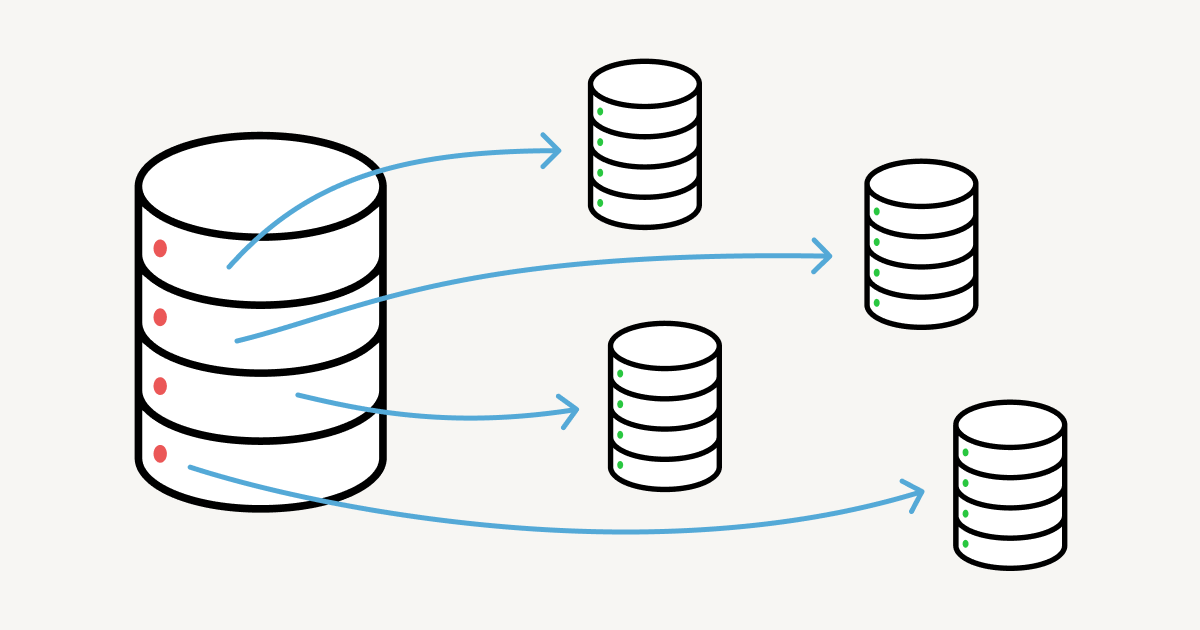

Relationships Between Tables

Real systems connect data across tables.

Inventory and Suppliers

Foreign Key Example: Referencing Values

Supplier Table (Parent)

| id (PK) | name | country |

|---|---|---|

| 1 | Pacific Furnishings | USA |

| 2 | West Coast Leather | USA |

| 3 | BrightHome | Canada |

Inventory Table (Child)

| id (PK) | name | category | supplier_id (FK) |

|---|---|---|---|

| 101 | Oak Table | Table | 1 |

| 102 | Leather Sofa | Sofa | 2 |

| 103 | Desk Lamp | Lamp | 3 |

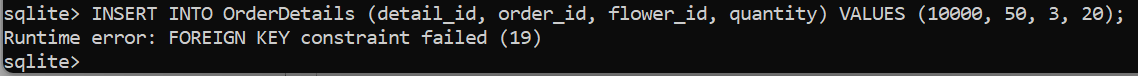

Example: Supplier and Inventory Relationship

Each inventory item references exactly one supplier using a foreign key.

name = "Pacific Furnishings"

references supplier.id

name = "Oak Table"

supplier_id = 1

The database enforces that supplier_id = 1 must exist in the

supplier table before the inventory row can be inserted.

Foreign Key

A reference to a primary key in another table.

- Links rows across tables

- Preserves consistency

- Prevents invalid references

supplier_id INTEGER

REFERENCES supplier(id)

Create Supplier Table

CREATE TABLE supplier (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

contact_email TEXT UNIQUE

);

Link Inventory to Supplier

CREATE TABLE inventory (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL,

quantity INTEGER DEFAULT 0,

supplier_id INTEGER,

FOREIGN KEY (supplier_id)

REFERENCES supplier(id)

);

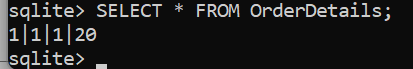

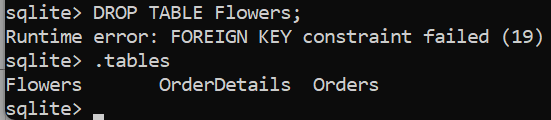

What the Foreign Key Enforces

- Supplier must exist before assignment

- No orphan records

- Clear ownership relationships

Key Relationships Summary

Takeaway

Constraints and keys are not optional. They are the foundation of reliable, maintainable databases.

Case Study Extension

The furniture store now wants to manage suppliers separately.

New Requirement

- Each item is supplied by exactly one supplier

- A supplier can provide many inventory items

- Supplier information must be stored only once

New Entity: Supplier

- Track supplier identity and contact information

- Enforce data quality using constraints

- Prepare for relationships with inventory

Task 1: Design the Supplier Table

- Table name:

supplier - At least 3 attributes

- Include a primary key

- Use NOT NULL and UNIQUE where appropriate

Think about what uniquely identifies a supplier and what information should never be missing.

Suggested Attributes

id: unique supplier identifiername: supplier nameemail: contact emailphone: optional contact number

Task 2: Create the Supplier Table

- Use

INTEGER PRIMARY KEY AUTOINCREMENT - Ensure

nameis NOT NULL - Ensure

emailis UNIQUE

Show example

CREATE TABLE supplier (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

email TEXT UNIQUE NOT NULL,

phone TEXT

);

Verify the Supplier Table

- Use a SQLite shell command

- Confirm columns and constraints

.schema supplier

Relationship Requirement

- Each inventory item references one supplier

- This is a one-to-many relationship

- Implemented using a foreign key

Inventory–Supplier Relationship

Task 3: Add Supplier Reference

- Add

supplier_idto inventory - Use a foreign key constraint

- Reference

supplier(id)

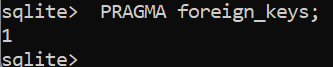

In SQLite, foreign keys must be enabled explicitly.

SQLite Foreign Key Reminder

PRAGMA foreign_keys = ON;

Run this before creating or using foreign key constraints.

Updated Inventory Table (With Supplier)

CREATE TABLE inventory (

id INTEGER PRIMARY KEY AUTOINCREMENT,

name TEXT NOT NULL,

category TEXT NOT NULL,

price REAL NOT NULL,

quantity INTEGER DEFAULT 0,

supplier_id INTEGER NOT NULL,

FOREIGN KEY (supplier_id)

REFERENCES supplier(id)

);

What This Prevents

- Assigning non-existent suppliers

- Duplicate supplier data

- Inconsistent relationships

Checkpoint

You now have: entities, primary keys, constraints, and a foreign key relationship enforced by SQLite.

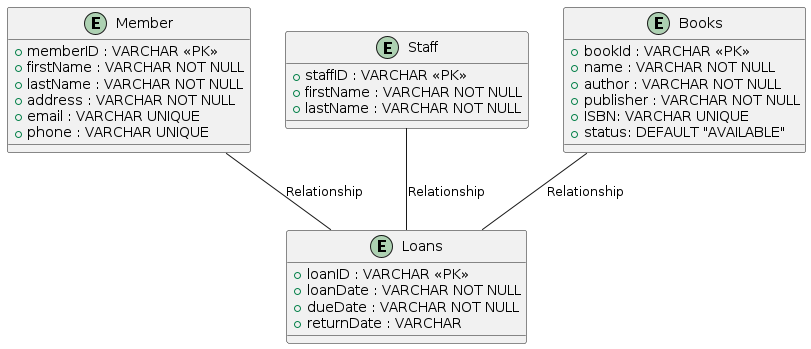

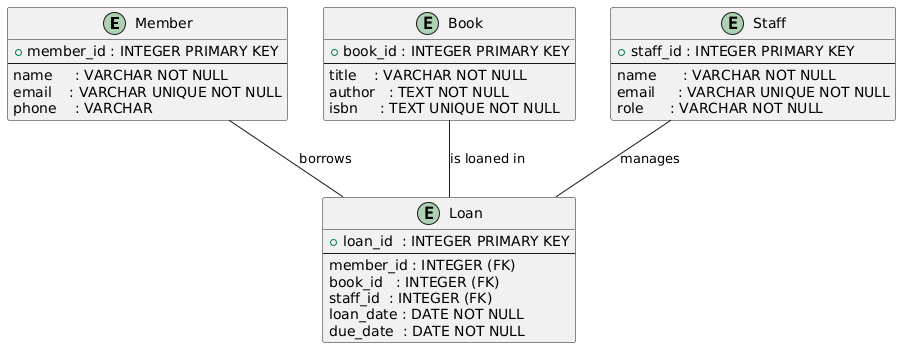

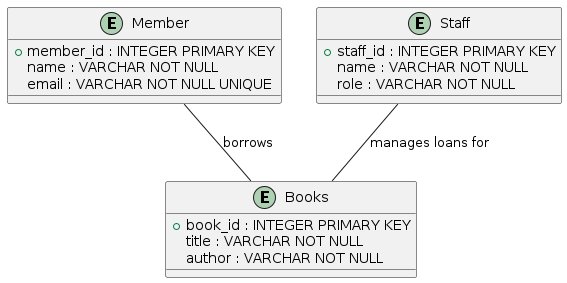

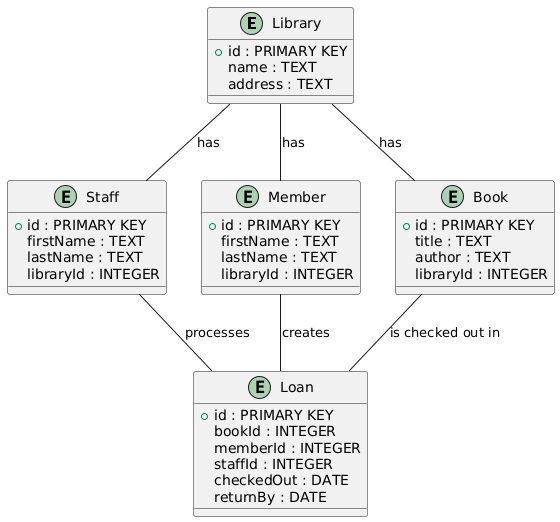

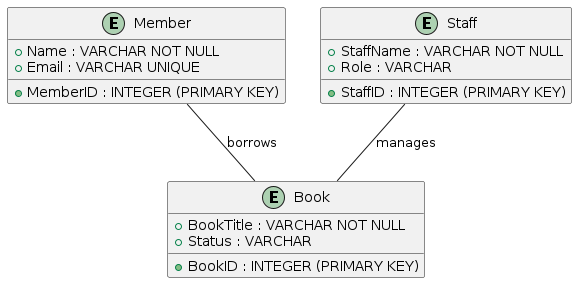

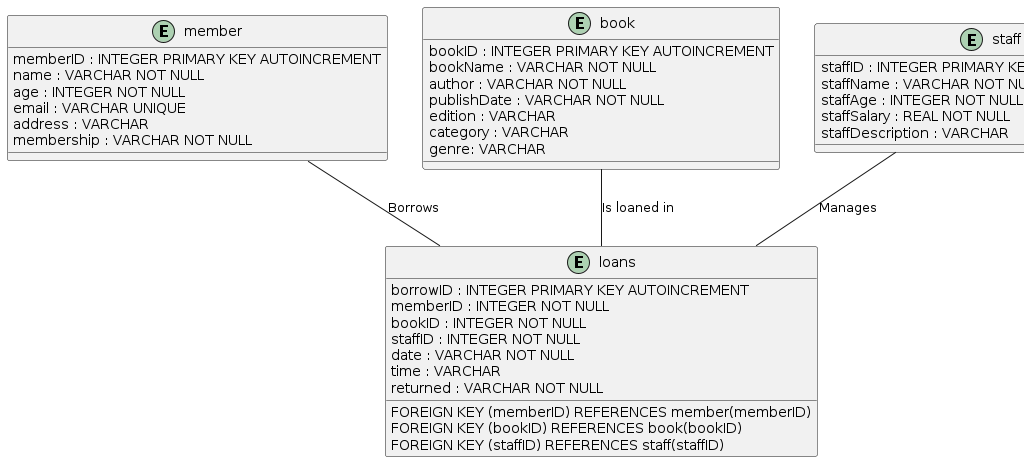

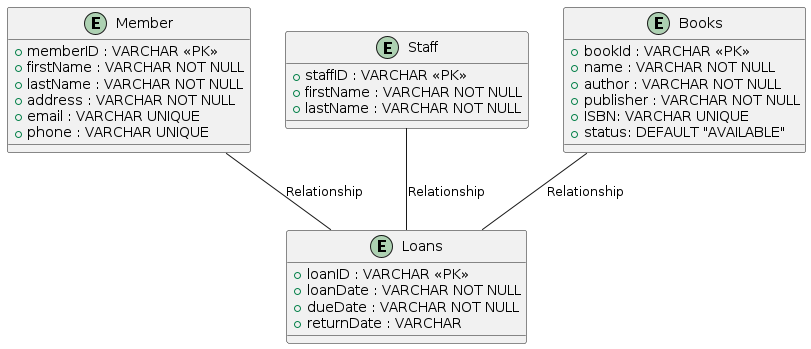

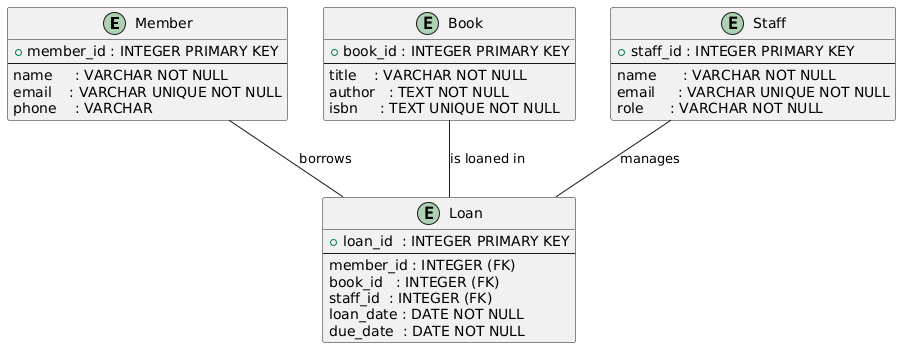

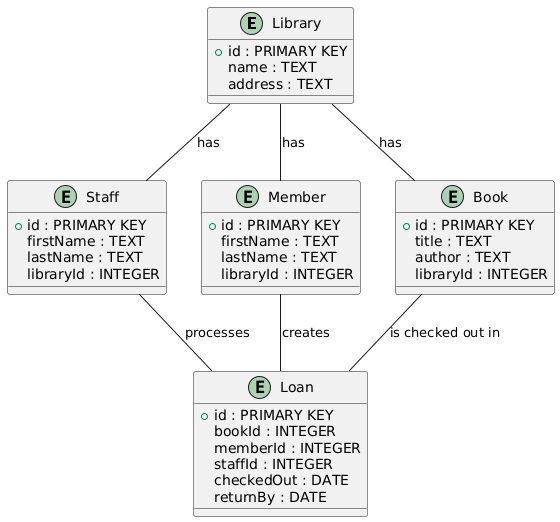

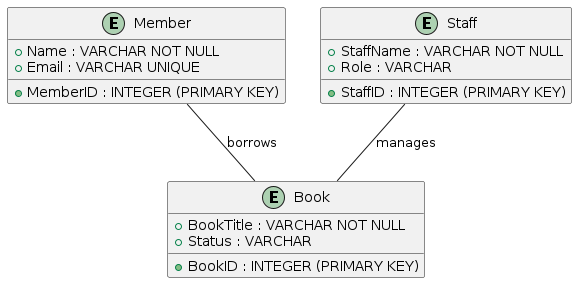

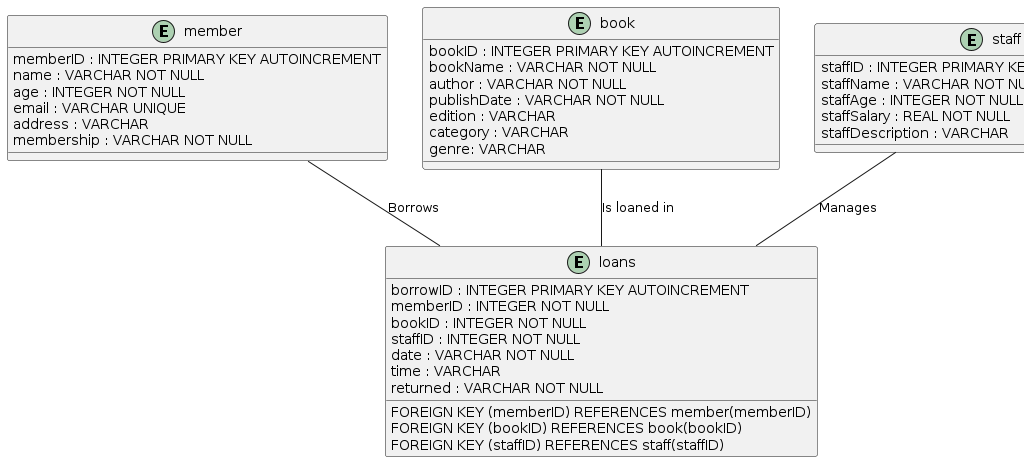

Lab 1: Library Database

Due: January 30, 11:59 PM

Goal: model, design, and implement a small relational database from a real-world

scenario.

Problem Scenario

A local library wants to track books loaned to members.

- Members can borrow multiple books

- Each book is borrowed by only one member at a time

- Library staff manage the loans

Step 1: Identify Entities

Entities are the things the system needs to track.

- Look for nouns in the scenario

- Ignore vague or irrelevant objects

- Each entity represents a table later

Entity Examples (Correct vs Incorrect)

| Good Entities | Bad Entities |

|---|---|

| Book | City |

| Member | Chair |

| Staff | Building |

Step 2: Identify Relationships

Relationships describe how entities are connected.

- Who borrows what?

- Who manages which action?

- Think in verbs, not nouns

Step 3: Create a UML-based ERD

- Use PlantUML to define entities

- Each entity needs at least 3 attributes

- Include a primary key

- Add data types and constraints

Output: libraryERD.png

ERD Structure Reminder

@startuml

entity "Book" {

+book_id: INTEGER

title: TEXT

isbn: TEXT

}

entity "Member" {

+member_id: INTEGER

name: TEXT

email: TEXT

}

Book -- Member: borrows

@endumlStep 4: Create Tables in SQLite

- Translate ERD into CREATE TABLE statements

- Use PRIMARY KEY and FOREIGN KEY

- Apply NOT NULL and UNIQUE where needed

Verify using the .schema command

Step 5: Perform CRUD Operations

| Operation | What You Do |

|---|---|

| Create | INSERT at least 3 rows |

| Read | SELECT all rows |

| Update | Change a value in one row |

| Delete | Remove one specific row |

What to Submit

- libraryERD.png: UML diagram

- librarySchema.png: SQLite .schema output

- libraryCrud.png: CRUD operation screenshots

This lab connects concepts → design → SQL implementation.

Homework 1: SQL Foundations

Due: January 30, 11:59 PM

Focus: conceptual understanding of SQL, SQLite, and course expectations.

Purpose of This Homework

This homework ensures you understand the role of SQL, the difference between language and system, and the scope of the course.

- No advanced syntax required

- Focus on ideas, not memorization

- Your own words matter

Required Reading

Please read Chapters 1 and 2 of the following books:

- SQL: The Complete Reference

- The Definitive Guide to SQLite

Goal: general understanding, not mastery of syntax.

Reading Guidance

- What problem does SQL solve?

- What role does a DBMS play?

- How data is created, queried, and changed

Do not worry about remembering every command.

Homework Questions (Conceptual)

- Why is SQL important?

- What is the difference between SQL and SQLite?

Answer in your own words. Short, clear explanations are preferred.

SQL Keywords to Explain

Explain the purpose of each keyword:

CREATESELECTUPDATEDELETEINSERT

What We Are Looking For

- What each keyword does conceptually

- When it is used

- No complex SQL examples required

Precision of thought matters more than syntax.

Syllabus Acknowledgment

Please read the course syllabus carefully:

- List any questions or points of clarification

Why the Syllabus Matters

- Defines course structure and expectations

- Explains grading and policies

- Clarifies responsibilities on both sides

Submitting this homework confirms that you reviewed the syllabus.

Submission Summary

- Answer all questions clearly

- Use your own words

- Submit via Canvas

This homework sets the foundation for everything that follows.

Module 2

Entity Relationship Model (ERM)

Agenda

- Requirements → entities

- Entities and relationships

- Cardinality: 1:1, 1:N, N:N

- Attributes, data types, constraints

- ERD → SQL schema

-- Work order:

-- 1) requirements

-- 2) ERD

-- 3) SQL schema

-- 4) queriesEvery Database Management System Starts with

Requirements

Requirement

The system should allow users to rent bikes easily

Requirement

The system should allow registered users to rent a bike by selecting a location, choosing an available bike, and completing payment through the application within three steps

Requirement

Purpose: Bike Rental Database System to manage rentals and track customers, bikes, and transactions

- Track availability of bikes, including unique id, condition, and location

- Log rental transactions, including bike id, customer id, start time, return time

- Maintain customer records, including name, contact details, rental history

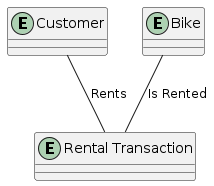

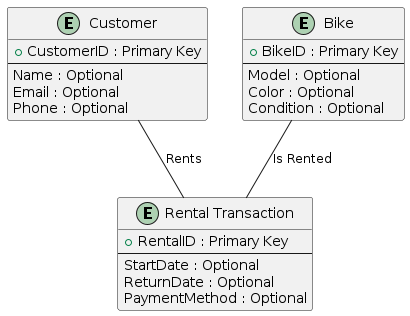

Translate Requirements → Model

- Underline nouns → candidate entities

- Underline verbs → candidate relationships

- Details become attributes

-- Nouns: user, bike, location, payment, transaction

-- Verbs: rent, select, choose, pay

-- Candidate entities:

-- - Customer

-- - Bike

-- - Location

-- - Payment

-- - RentalTransactionEntities

- Real world objects or concepts

- Usually nouns

- Later implemented as tables

-- Entity candidates:

-- Customer

-- Bike

-- RentalTransaction

-- Location

-- PaymentRelationships

- Connections between entities

- Usually verbs

- Shown as lines in ERD

-- Relationship examples:

-- Customer rents Bike

-- Bike located at Location

-- Customer pays PaymentQuick Check

- Question: "Customer rents a Bike"

- Is it an entity or a relationship?

-- ERD view:

-- Customer --(rents)--> BikeQuick Check

- Question: "Bike"

- Is it an entity or a relationship?

-- Entity will have attributes:

-- BikeID, Model, Color, Condition, AvailabilityName Rules

- Conceptually, ERD names can overlap

- Technically, table names must be unique

- Use consistent casing

-- Prefer consistent names:

-- Customer, Bike, RentalTransaction

-- Avoid reserved words for table namesCase Sensitivity

- Conceptually, case does not matter in ERD

- Technically, it depends on DB and OS

-- Safe habit:

-- Pick a naming style and keep it.

-- Example: PascalCase tables, snake_case columnsRequirement

A travel booking system should allow users to search for, compare, and book flights, hotels, and rental cars. Users must be able to filter results by price, date, and location, and securely complete payments

Requirement (highlight nouns/verbs)

A travel booking system should allow users to search for, compare, and book flights, hotels, and rental cars. Users must be able to filter results by price, date, and location, and securely complete payments.

Text → Visual

- Text is good for brainstorming

- ERD is good for precision and review

- ERD is easier to validate as a group

-- Practice:

-- turn nouns into entity boxes

-- connect boxes with verb labels- Conceptual model of the database

- Shows entities and their attributes

- Illustrates relationships between entities

- Independent of any database system

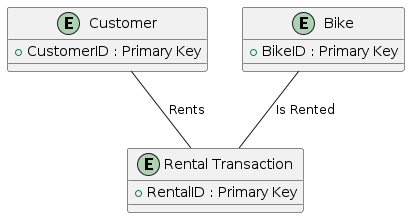

Attributes

- Entities are not enough

- Each entity has a list of attributes

- Attributes become columns

-- Customer attributes:

-- CustomerID, Name, Email, Phone

-- Bike attributes:

-- BikeID, Model, Color, Condition, AvailabilityPrimary Key Attribute

- Mandatory technical attribute

- Unique per row

- Often integer

-- Primary key examples:

-- Customer(CustomerID)

-- Bike(BikeID)

-- RentalTransaction(RentalID)- Each entity includes a primary key

- Primary key uniquely identifies each record

- Primary key cannot be NULL

- Primary key remains stable over time

Optional Attributes

- Extra details keeps the system useful

- Not needed to identify the entity

- Often nullable

-- Optional examples:

-- Customer.Email, Customer.Phone

-- Bike.Color, Bike.Condition

-- RentalTransaction.ReturnDate- Entities can have multiple descriptive attributes

- Attributes store detailed information

- Not all attributes are required for identification

- Attributes refine the meaning of an entity

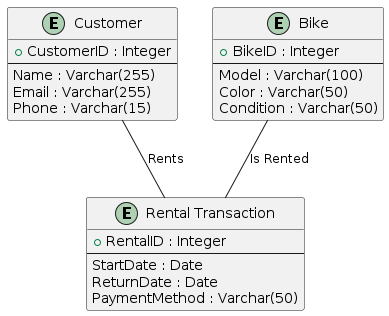

Data Types

- Each attribute has a data type

- Data types impact validation and storage

- Keep types aligned across layers

-- BookingDate DATE

-- Availability BOOLEAN

-- Name VARCHAR(100)- Each attribute is assigned a data type

- Data types define how values are stored

- Correct types prevent invalid data

- Data types must align with application logic

SQL Standard Data Types

| Data Type | Description | Example |

|---|---|---|

| INTEGER | A whole number, no decimal points | 42 |

| BIGINT | A large integer value | 9223372036854775807 |

| DECIMAL(p, s) | Exact numeric value | DECIMAL(10, 2) |

| FLOAT | Approximate numeric value | 3.14159 |

| CHAR(n) | Fixed length string | CHAR(5) |

| VARCHAR(n) | Variable length string | VARCHAR(50) |

| TEXT | Variable length text | "long notes" |

| DATE | Date | 2025-01-14 |

| TIME | Time | 14:30:00 |

| TIMESTAMP | Date and time | 2025-01-14 14:30:00 |

| BOOLEAN | TRUE or FALSE | TRUE |

| BLOB | Binary data | [bytes] |

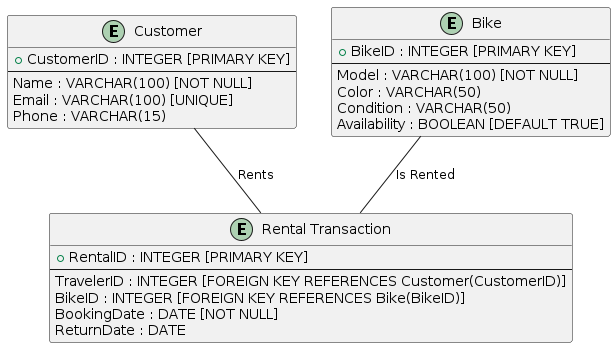

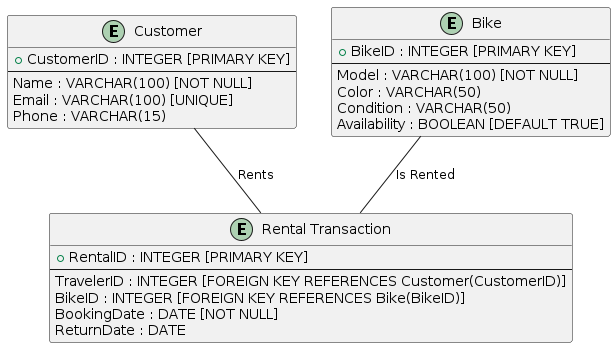

Constraints

- Rules that protect the database

- Improve correctness

- Make errors visible early

-- Common constraints:

-- PRIMARY KEY

-- FOREIGN KEY

-- NOT NULL

-- UNIQUE

-- DEFAULT- Constraints enforce data correctness

- Primary keys ensure uniqueness

- NOT NULL prevents missing values

- UNIQUE avoids duplicate data

SQL Attribute Constraints

| Constraint | Description |

|---|---|

| PRIMARY KEY | Uniquely identifies each record; implies NOT NULL |

| FOREIGN KEY | Ensures referential integrity by linking to another table |

| NOT NULL | Column cannot be NULL |

| UNIQUE | All values in a column are distinct |

| DEFAULT | Default value when no value is provided |

| AUTO_INCREMENT | Auto generates unique integer id (DB specific) |

Conceptual vs Technical (SQL) Terms

| Conceptual Term | Technical Term |

|---|---|

| Diagram | Schema |

| Entity | Table |

| Attribute | Column |

| Record | Row |

| Identifier | Primary Key |

| Relationship | Foreign Key |

| Optional Attribute | Nullable Column |

| Mandatory Attribute | NOT NULL Column |

From ERD to Database Schema

CREATE TABLE Customer (

CustomerID INTEGER PRIMARY KEY AUTOINCREMENT,

Name VARCHAR(100) NOT NULL,

Email VARCHAR(100) UNIQUE,

Phone VARCHAR(15)

);

CREATE TABLE Bike (

BikeID INTEGER PRIMARY KEY AUTOINCREMENT,

Model VARCHAR(100) NOT NULL,

Color VARCHAR(50),

Condition VARCHAR(50),

Availability BOOLEAN DEFAULT TRUE

);

CREATE TABLE RentalTransaction (

RentalID INTEGER PRIMARY KEY AUTOINCREMENT,

CustomerID INTEGER NOT NULL,

BikeID INTEGER NOT NULL,

BookingDate DATE NOT NULL,

ReturnDate DATE,

FOREIGN KEY (CustomerID) REFERENCES Customer(CustomerID),

FOREIGN KEY (BikeID) REFERENCES Bike(BikeID)

);

Misaligned Data Types

- Same data, different types across layers

- Causes validation failures and data loss

- DB rejects invalid formats

-- Example risk:

-- UI collects phone as text "+1-123-456"

-- DB column INTEGER

-- Insert fails or loses formattingExamples of Misaligned Data Types

| Front-End | Back-End | Database | Result |

|---|---|---|---|

| Text input for phone number | String validation for format | INTEGER type | Formatted numbers rejected |

| Dropdown allows decimal selection | Expects integer values | FLOAT type | Unexpected decimal input |

| Date picker with local format | Assumes ISO format | DATE type | Date conversion errors |

ERD Quiz (1/3)

- Question: What does an entity represent?

- A. A database table

- B. A real world object or concept

- C. A relationship between tables

- D. A data attribute

-- Entity → table later

-- Example: Customer, BikeERD Quiz (2/3)

- Question: What is the purpose of a relationship?

- A. To store data

- B. To define data types

- C. To show how entities connect

- D. To create unique identifiers

-- Relationship example:

-- Customer rents BikeERD Quiz (3/3)

- Question: What is the purpose of a primary key?

- A. Allow duplicate rows

- B. Uniquely identify each row

- C. Establish relationships

- D. Enforce data types

-- Primary key column:

-- CustomerID INTEGER PRIMARY KEYHow to Model Relationships in ERD?

Cardinality matters: 1:1, 1:N, N:N

1 Entity

0 Relationship

2 Entity

1 Relationship

3 Entity

2 Relationship

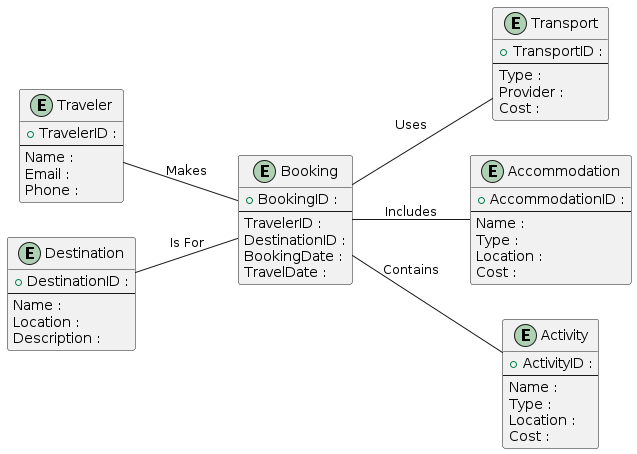

- Travel booking application domain

- Users search and compare options

- Bookings include flights, hotels, and rental cars

- Attributes capture price, date, and location

Why Minimum Relationships?

- Performance: less joins

- Simplicity: easier to maintain

- Clarity: easier to explain and test

- Consistency: less places for mismatch

-- Design tip:

-- prefer the simplest ERD that meets requirements

-- add complexity only when neededRelationship Types (Cardinality)

| Cardinality | Meaning | Example |

|---|---|---|

| 1:1 | Each record links to one record | User and Profile |

| 1:N | One record links to many records | Customer and Rentals |

| N:N | Many records link to many records | Students and Courses |

When to Use Relationship Type?

| Cardinality | When to Use | Example |

|---|---|---|

| 1:1 | Each record in one table corresponds to exactly one record in another table | User and Profile |

| 1:N | One record can be associated with multiple records in another table | Library and Books |

| N:N | Multiple records in both tables can be related to each other | Students and Courses |

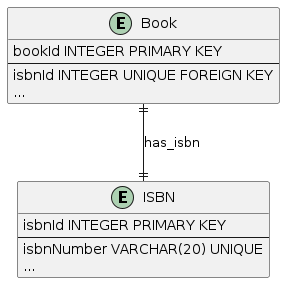

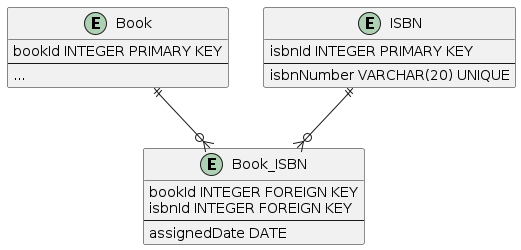

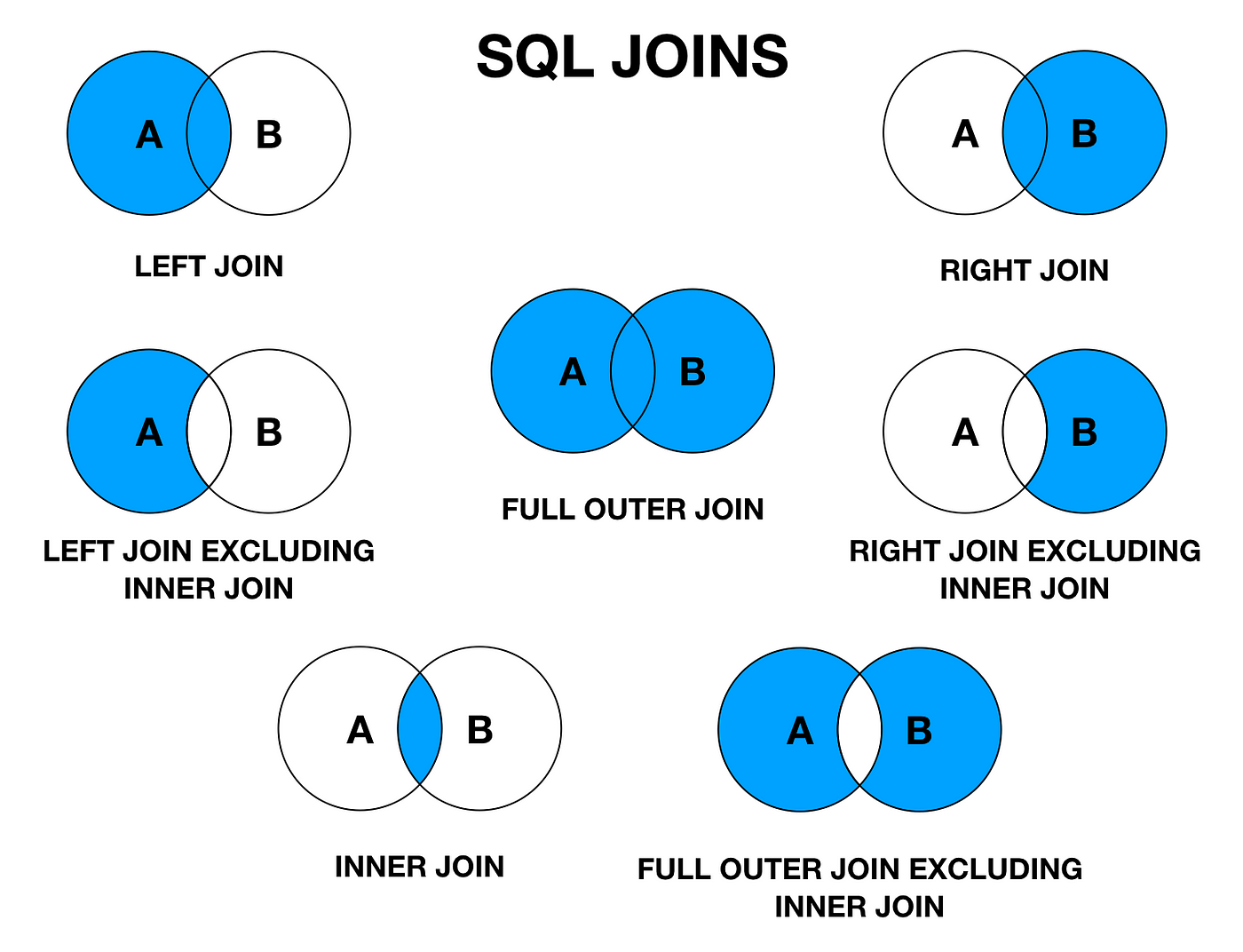

Querying Relationships

- One relationship → one join

- More relationships → more joins

- Start from the filtering table

SELECT Book.title

FROM Book

WHERE Book.isbnId = '978-1-';

SELECT Book.title

FROM Book, ISBN

WHERE Book.bookId = ISBN.bookId

AND ISBN.isbnId = '978-1-';

SELECT Book.title

FROM Book, ISBN, Book_ISBN

WHERE Book.bookId = Book_ISBN.bookId

AND ISBN.isbnId = Book_ISBN.isbnId

AND ISBN.isbnId = '978-1-';Which Relationship?

| # | Scenario | Which Cardinality? |

|---|---|---|

| 1 | Book and ISBN | |

| 2 | Author and Book | |

| 3 | Student and borrow Book | |

| 4 | Student and Student ID | |

| 5 | Book and Publisher | |

| 6 | Publisher and Books | |

| 7 | Library and Book | |

| 8 | Book and Format (e.g., eBook, hardcover) | |

| 9 | Book and Catalog Number in the Library System | |

| 10 | Reader and Book Review |

Module 2 Recap

- Requirements drive the model

- Entities (nouns) become tables

- Relationships (verbs) connect entities

- Attributes need types and constraints

- ERD becomes SQL schema

-- Next step:

-- use your ERD to justify every CREATE TABLE line- Draft an ERD for a bike rental store

- Identify core entities (e.g., Bike, Customer, Rental)

- List key attributes for each entity

- Mark primary key attributes

- Decide which entities are connected

@startuml

entity "EntityA" as A {

+id: INTEGER

attribute_1: VARCHAR

attribute_2: INTEGER

}

entity "EntityB" as B {

+id: INTEGER

attribute_1: VARCHAR

}

A -- B: "relationship"

@enduml

Case Study

Furniture Store Database

Requirements → ERM → Tables → Data

Agenda

- Gather requirements

- Extract entities and relationships

- Build ERM (PlantUML)

- Convert to SQL tables

- Insert sample data

- Query and maintain data

-- Work order:

-- 1) requirements

-- 2) ERM / ERD

-- 3) schema

-- 4) data

-- 5) queriesRequirement

The store should sell furniture products to customers and record each order.

Requirement

Each order can include multiple products with quantities and prices at the time of purchase.

Requirement

The store should track inventory per product, so staff know what is in stock and what needs reordering.

Requirements

- One store location (for now)

- Basic customer info

- Orders with line items

- Inventory count per product

-- Design choice:

-- Keep the first version small.

-- Add delivery, returns, warehouses later.Nouns → Entities

- Customer

- Product

- Order

- OrderItem

- Inventory

-- Minimal set of tables:

-- 1) Product (includes inventory count)

-- 2) Customer

-- 3) CustomerProductSales (captures sales rows with two FKs)Key Modeling Decision

- We want max 3 tables

- Orders still need multiple items

- We store each sale as a row with two foreign keys

-- Tradeoff:

-- Normalized: Order + OrderItem (best design)

-- Constrained: store each sale inCustomerProductSalesWhat we lose with 3 tables

- No separate order header (shipping, payment, etc.)

- Harder to model one order with many line items as a single unit

- Still acceptable for a first prototype and class demo

Relationships (conceptual)

- Customer buys Products (N:N)

- Sales rows store qty and unit_price at purchase time

- Product stock quantity (1:1 inventory)

-- Conceptual ERM:

-- Customer N:N Product (via CustomerProductSales)

-- Product carries stock columns (qty_on_hand, reorder_level)ERM Diagram (PlantUML)

- 3 tables only

- Sales captured as rows with 2 foreign keys

- Still shows true business relationships

@startuml

hide circle

skinparam linetype ortho

skinparam shadowing false

entity "Customer" as Customer {

+customer_id: INTEGER

--

name: VARCHAR

email: VARCHAR

phone: VARCHAR

}

entity "Product" as Product {

+product_id: INTEGER

--

sku: VARCHAR

name: VARCHAR

category: VARCHAR

price: DECIMAL

qty_on_hand: INTEGER

reorder_level: INTEGER

}

entity "CustomerProductSales" as CustomerProductSales {

+sale_id: INTEGER

--

customer_id: INTEGER (FK)

product_id: INTEGER (FK)

sale_date:DATE

qty :INTEGER

unit_price: DECIMAL

status :VARCHAR

}

Customer ||--o{ CustomerProductSales: buys

Product ||--o{ CustomerProductSales: sold_in

@endumlSales row format

- Each row = one purchased product

- Records customer_id and product_id (two FKs)

- Captures qty and unit_price at purchase time

-- Example row meaning:

-- customer 1 bought product 5

-- qty = 2 at unit_price = 89.00 on 2026-01-10Primary Keys

- Customer: customer_id

- Product: product_id

- CustomerProductSales: sale_id

-- Primary keys are stable identifiers.

-- They should not carry meaning.Constraints (simple)

- sku UNIQUE

- qty_on_hand NOT NULL DEFAULT 0

- Sales.customer_id must exist

- Sales.product_id must exist

-- Constraints catch errors early.

-- They protect data quality.From ERM → Tables

- Entities become tables

- Attributes become columns

- Relationships become foreign keys

-- Customer ||--o{ CustomerProductSales

-- FK: CustomerProductSales.customer_id → Customer.customer_id

-- FK: CustomerProductSales.product_id → Product.product_idTables Overview (3 only)

| Table | Purpose | Key Columns |

|---|---|---|

| Customer | Who buys | customer_id (PK), email (UNIQUE) |

| Product | What is sold and stock count | product_id (PK), sku (UNIQUE), qty_on_hand |

| CustomerProductSales | Sales rows (customer ↔ product) | sale_id (PK), customer_id (FK), product_id (FK) |

Schema: Customer

- Keep identity separate from email

- Email is unique but can change

- Phone stored as text

CREATE TABLE Customer (

customer_id INTEGER PRIMARY KEY AUTOINCREMENT,

name VARCHAR(100) NOT NULL,

email VARCHAR(100) UNIQUE,

phone VARCHAR(30)

);Schema: Product

- sku is a business identifier (unique)

- qty_on_hand defaults to 0

- reorder_level helps restocking

CREATE TABLE Product (

product_id INTEGER PRIMARY KEY AUTOINCREMENT,

sku VARCHAR(40) NOT NULL UNIQUE,

name VARCHAR(120) NOT NULL,

category VARCHAR(60),

price DECIMAL(10,2) NOT NULL,

qty_on_hand INTEGER NOT NULL DEFAULT 0,

reorder_level INTEGER NOT NULL DEFAULT 0

);Schema: CustomerProductSales

- References Customer and Product

- Stores qty and unit_price per purchase

- Status kept simple for demo

CREATE TABLE CustomerProductSales (

sale_id INTEGER PRIMARY KEY AUTOINCREMENT,

customer_id INTEGER NOT NULL,

product_id INTEGER NOT NULL,

sale_date DATE NOT NULL,

status VARCHAR(30) NOT NULL DEFAULT 'NEW',

qty INTEGER NOT NULL,

unit_price DECIMAL(10,2) NOT NULL,

FOREIGN KEY (customer_id) REFERENCES Customer(customer_id),

FOREIGN KEY (product_id) REFERENCES Product(product_id)

);Sample Data Plan

- 2 customers

- 6 products

- Sales rows represent purchases

- Multiple rows can share the same sale_date

-- Insert order:

-- 1) Customer

-- 2) Product

-- 3) CustomerProductSalesInsert Customers

- Keep it realistic

- Email optional but unique when present

INSERT INTO Customer (name, email, phone) VALUES

('Ava Kim', 'ava.kim@example.com', '209-555-0101'),

('Tom Patel', 'tom.patel@example.com', '209-555-0112');Insert Products (part 1)

- sku is the business identifier

- qty_on_hand reflects current stock

INSERT INTO Product (sku, name, category, price, qty_on_hand, reorder_level) VALUES

('SOFA-1001', 'Linen Sofa 3 Seat', 'Sofa', 899.00, 5, 2),

('TBLE-2001', 'Oak Dining Table', 'Table', 749.00, 2, 1),

('CHAI-3001', 'Walnut Accent Chair', 'Chair', 299.00, 7, 3);Insert Products (part 2)

- Mix high and low cost items

- Reorder level supports planning

INSERT INTO Product (sku, name, category, price, qty_on_hand, reorder_level) VALUES

('DSKR-4001', 'Standing Desk', 'Desk', 499.00, 3, 1),

('LAMP-5001', 'Floor Lamp', 'Lighting', 89.00, 12, 5),

('RUGG-6001', 'Wool Rug 5x8', 'Rug', 179.00, 4, 2);Create Sales

- Each row captures one purchased product

- unit_price captured at purchase time

- Multiple rows on same date can represent one "order"

INSERT INTO CustomerProductSales (customer_id, product_id, sale_date, status, qty, unit_price)

VALUES

(1, 1, '2026-01-10', 'PAID', 1, 899.00),

(1, 5, '2026-01-10', 'PAID', 2, 89.00);Create Sales (more)

- Multiple items represented as multiple rows

- Different customer

INSERT INTO CustomerProductSales (customer_id, product_id, sale_date, status, qty, unit_price)

VALUES

(2, 2, '2026-01-11', 'NEW', 1, 749.00),

(2, 3, '2026-01-11', 'NEW', 2, 299.00),

(1, 6, '2026-01-12', 'PAID', 1, 179.00),

(1, 4, '2026-01-12', 'PAID', 1, 499.00);Read: List Products

- Start with simple SELECT

- Show inventory and reorder level

SELECT product_id, sku, name, price, qty_on_hand, reorder_level

FROM Product

ORDER BY category, price DESC;Stock Check Rule

| Condition | Meaning | Action |

|---|---|---|

| qty_on_hand = 0 | Out of stock | Block purchase |

| qty_on_hand ≤ reorder_level | Low stock | Restock soon |

| qty_on_hand > reorder_level | Healthy stock | No action |

Read: Low Stock Report

- Common operational query

- Used by staff daily

SELECT sku, name, qty_on_hand, reorder_level

FROM Product

WHERE qty_on_hand <= reorder_level

ORDER BY qty_on_hand ASC;Read: Orders per Customer

- Simple join (Customer → Sales)

- Counts sales rows, shows totals

SELECT c.name,

COUNT(s.sale_id) AS sale_rows,

SUM(s.qty * s.unit_price) AS total_spent

FROM Customer c

JOIN CustomerProductSales s ON s.customer_id = c.customer_id

GROUP BY c.customer_id

ORDER BY total_spent DESC;Update: Restock Inventory

- Staff receives shipment

- Increase qty_on_hand

UPDATE Product

SET qty_on_hand = qty_on_hand + 10

WHERE sku = 'TBLE-2001';Update: Price Change

- Price changes over time

- Sales keep old unit_price per row

UPDATE Product

SET price = 799.00

WHERE sku = 'SOFA-1001';Update: Sale Status

- Sale rows move through states

- NEW → PAID → FULFILLED

UPDATE CustomerProductSales

SET status = 'FULFILLED'

WHERE sale_id = 1;Delete: Remove a Test Sale

- Common during development

- Only delete safe rows

DELETE FROM CustomerProductSales

WHERE sale_id = 2

AND status = 'NEW';CRUD Summary

| Goal | SQL | Example |

|---|---|---|

| Read data | SELECT | List low stock products |

| Add data | INSERT | Create new sales rows |

| Change data | UPDATE | Restock inventory |

| Remove data | DELETE | Delete a test sale |

Data Quality Check

- Never allow negative stock

- Never allow negative prices

- Use constraints or checks when available

-- Guard rails:

-- qty_on_hand >= 0

-- price > 0

-- qty > 0

-- valid status valuesWhy 3 tables is a good start?

- Shows minimum viable schema

- Makes tradeoffs explicit

- Sets up normalization discussion

-- Next iteration:

-- Add Order and OrderItem tables

-- Group line items under an order_id

-- Stronger constraints + joinsOptional Extension (later)

- Delivery address per order

- Payments table

- Warehouses and multi location stock

- Returns and refunds

-- Grow the model only when needed.

-- Keep the first version stable.Recap

- Requirements define scope

- ERM clarifies entities and relationships

- Tables implement the model

- Data and CRUD prove the design works

-- Habit:

-- justify each column with a requirementPractice

Modify requirements and update the ERM and tables

- Add delivery support

- Add returns

- Add multiple warehouses

- Replace items_json with OrderItem table

Module 3

Relational Algebra

Edgar F. Codd

- Born: August 19, 1923

- Known for: Relational Database Model

- Key Contribution: Relational Algebra (1970)

- Impact: Foundation of SQL and modern databases

- Award: ACM Turing Award (1981)

Today

- Practice Questions

- Set Theory

- Relational Algebra

- Core Operations

- Advanced Operations

- Optimization and Efficiency

- Application Use Case

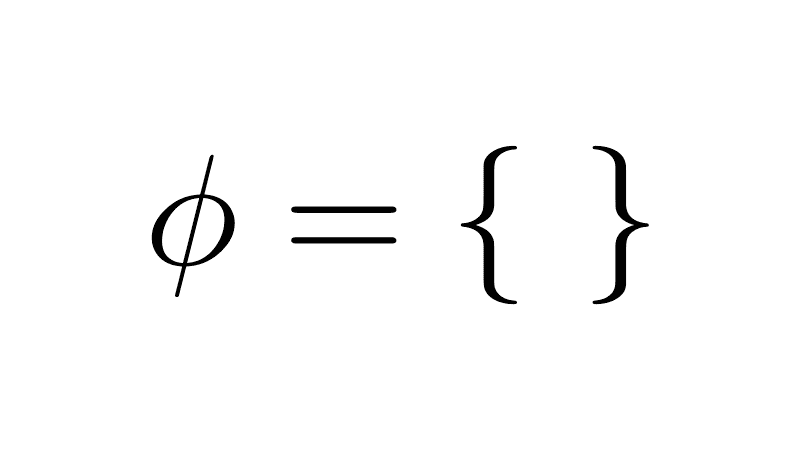

Georg Cantor: Founder of Set Theory

- No concept of "set"

- No way to guarantee uniqueness

- Only lists or bags

- Duplicates unavoidable

- No math for collections

items = []

items.append("chair")

items.append("table")

items.append("chair") # duplicate allowed!

print(items) # ['chair', 'table', 'chair']Automatic uniqueness

No duplicates possible

s = set()

s.add("chair")

s.add("table")

s.add("chair") # ignored!

print(s) # {'chair', 'table'}What is a Set?

Order does not matter.

Chronology of Set Operations

- Union (∪) - 1873 - Georg Cantor

- Intersection (∩) - 1873 - Georg Cantor

- Difference (−) - 1873 - Georg Cantor

- Complement (ᶜ) - 1888 - Richard Dedekind

- Logic operators (∨, ∧, ¬) - 1879–1890s - Frege / Peirce

Set Operators and Logical Operators

- Union (∪) ↔ OR (∨)

- Intersection (∩) ↔ AND (∧)

- Complement (ᶜ) ↔ NOT (¬)

- Difference (−) ↔ AND NOT (∧¬)

Sets:

A = {1, 2}

B = {2, 3}

A ∪ B = {1, 2, 3}

A ∩ B = {2}

Logic:

P(x): x ∈ A

Q(x): x ∈ B

P(x) ∨ Q(x): true for 1,2,3

P(x) ∧ Q(x): true for 2

¬P(x): true for 3,4

s = {"chair", "table"}

print(len(s)) # 2

s.add("chair") # ignored

s.add("desk") # added

s.add("chair") # ignored again

print(len(s)) # still 3

print(s) # {'chair', 'table', 'desk'}CREATE TABLE Furniture (

id INTEGER PRIMARY KEY AUTOINCREMENT,

item VARCHAR(50) UNIQUE

);

INSERT INTO Furniture (item) VALUES ('chair'), ('table');

-- Duplicate item → raises exception

INSERT INTO Furniture (item) VALUES ('chair');

-- Error: UNIQUE constraint failed: Furniture.item

-- Using OR IGNORE to mimic set (duplicates ignored)

INSERT OR IGNORE INTO Furniture (item) VALUES ('chair');

INSERT OR IGNORE INTO Furniture (item) VALUES ('desk');

INSERT OR IGNORE INTO Furniture (item) VALUES ('chair');

SELECT COUNT(*) FROM Furniture; -- 3

Set = {chair, table, lamp} with no duplicates

const furniture = new Set([

"chair",

"table",

"lamp"

]);Set<String> furniture = new HashSet<>();

furniture.add("chair");

furniture.add("table");

furniture.add("lamp");furniture = {"chair", "table", "lamp"}CREATE TABLE Furniture (

item INTEGER PRIMARY KEY

);

INSERT INTO Furniture VALUES

('chair'), ('table'), ('lamp');Set Theory: Basic Concept & Use Cases (~1870–1890)

- Set: collection of things

- No duplicates allowed

- Union: combine sets

- Intersection: find common

- Difference: remove one

- Compare set sizes

- Organize & count clearly

- Crop yield forecasting data

- Land parcel boundary mapping

- Soil type classification

- Weather pattern analysis

- Farm census comparisons

- Infinite resource allocation

- Plant breeding statistics

Set Theory: Core Ideas

- Developed by Georg Cantor (late 19th century)

- No duplicates: ensures clarity

- Key operations: union, intersection, difference

- Foundation for relational algebra

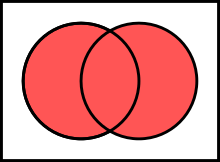

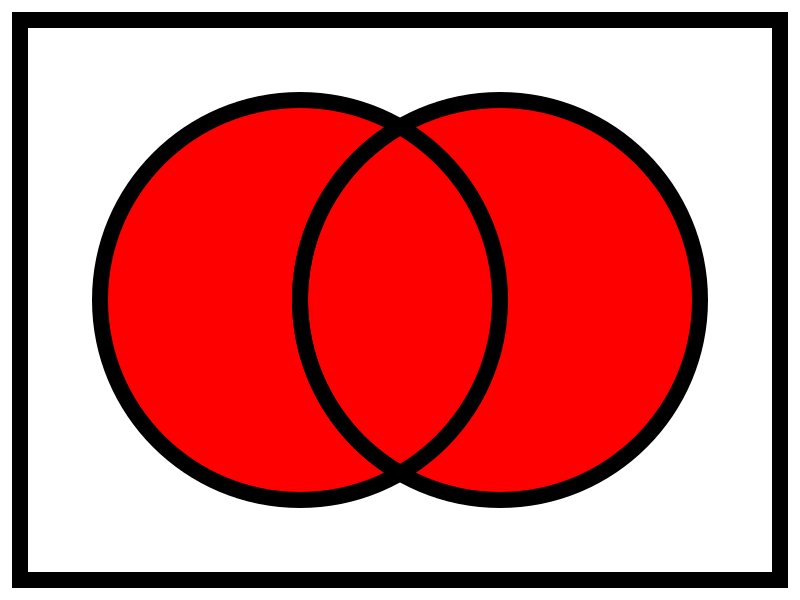

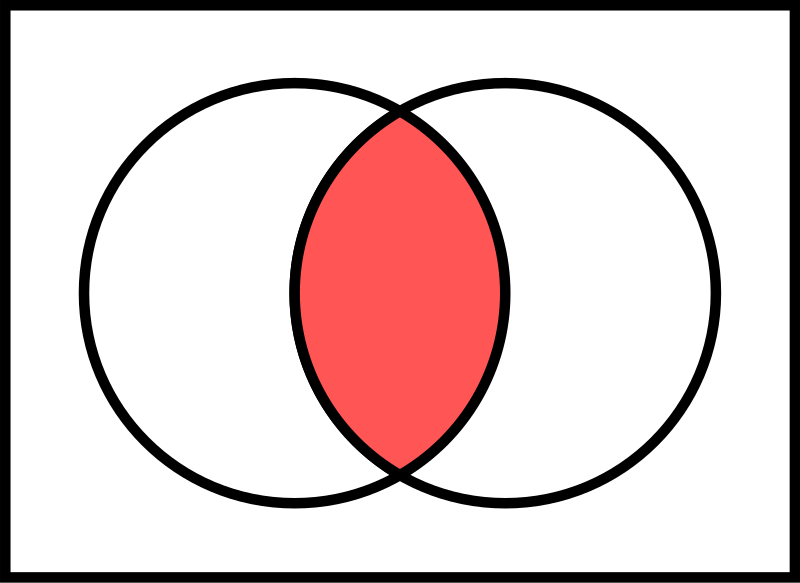

Set Operations Summary

| Operation | Symbol | Description | Diagram |

|---|---|---|---|

| Union | ⋃ | All unique elements |  |

| Intersection | ∩ | Common elements only |  |

| Difference | − | In A but not in B |  |

Set Theory Concepts: Visual Overview

What is a Tuple?

Ordered sequence of elements

- (1, 'Trek', 'Mountain', 1200)

- (1, 'Trek', 'Mountain', 1200)

- (1, 'Trek', 'Mountain', 1200)

- (2, 'Giant', 'Road', 1500)

- (3, 'Cannondale', 'Hybrid', 1000)

Bicycles relation:

bikeId | brand | type | price

1 | Trek | Mountain | 1200 ← tuple 1

1 | Trek | Mountain | 1200 ← tuple 1

1 | Trek | Mountain | 1200 ← tuple 1

2 | Giant | Road | 1500 ← tuple 2

3 | Cannondale | Hybrid | 1000 ← tuple 3

Each row = one tuple (ordered sequence of elements)Relation = Set of Tuples

- No duplicate rows

- All tuples have the same attributes

- Example schema: Bikes(bikeId, brand, type, price)

Bikes relation (a set of tuples):

bikeId | brand | type | price

1 | Trek | Mountain | 1200

2 | Giant | Road | 1500

3 | Cannondale | Hybrid | 1000

• Each row = one tuple

• No duplicate rows allowed

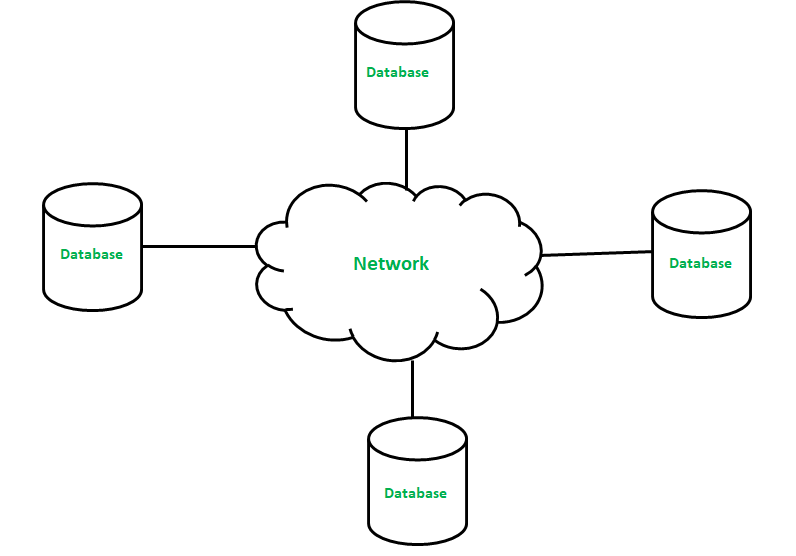

• All rows share the same columns (attributes)Relational Algebra Flow

(Set of Tuples)

(Set of Tuples)

Relational Algebra Operators

Selection (σ)

- Filters rows based on condition

- Reduces number of tuples

- Example: σ(price < 1200)(Bicycles)

Input Bicycles:

bikeId | brand | type | price

1 | Trek | Mountain | 1699

2 | Giant | Road | 1100

3 | Cannondale | Hybrid | 999

After σ(price < 1200):

bikeId | brand | type | price

2 | Giant | Road | 1100

3 | Cannondale | Hybrid | 999Projection (π)

- Selects specific columns

- Removes unwanted attributes & duplicates

- Example: π(brand, price)(Bicycles)

Input:

bikeId | brand | model | price | color

1 | Trek | Roscoe 7 | 1699 | Blue

2 | Giant | Contend | 1299 | Black

3 | Trek | Marlin 5 | 799 | Red

After π(brand, price):

brand | price

Trek | 1699

Giant | 1299

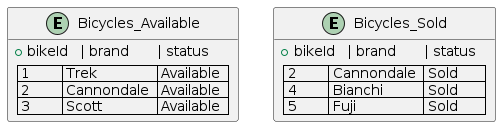

Trek | 799Union (⋃)

- Combines two compatible relations

- Removes duplicates

- Example: Available ⋃ Sold

Available:

bikeId | brand

1 | Trek

2 | Giant

Sold:

bikeId | brand

2 | Giant

4 | Scott

After Union:

bikeId | brand

1 | Trek

2 | Giant

4 | ScottSet Difference (−)

- Tuples in first but not in second

- Example: Stock − Sold

Stock:

bikeId | brand

1 | Trek

2 | Giant

3 | Cannondale

Sold:

bikeId | brand

2 | Giant

After Difference:

bikeId | brand

1 | Trek

3 | CannondaleCartesian Product (×)

- All possible combinations of tuples

- Example: Bikes × Stores

Bikes (2 rows) × Stores (2 rows) = 4 rows

Bikes: Stores:

1 Trek A Berlin

2 Giant B Munich

Result:

bikeId | brand | store | city

1 | Trek | A | Berlin

1 | Trek | B | Munich

2 | Giant | A | Berlin

2 | Giant | B | MunichNatural Join (⨝)

- Cartesian + equality on shared attribute names

- Example: Orders ⨝ Customers

Orders: Customers:

orderId | custId | amt custId | name

101 | 5 | 299 5 | Alice

102 | 7 | 450 7 | Bob

After Natural Join:

orderId | custId | amt | name

101 | 5 | 299 | Alice

102 | 7 | 450 | BobDivision (÷)

- Tuples in left that match ALL values in right

- Example: Students ÷ RequiredCourses

Enrollments: Required:

student | course course

A | Math Math

A | Physics Physics

B | Math

After Division (students who took ALL required):

student

ARenaming (ρ)

- Renames relation or attributes

- Example: ρ(Inv)(Bicycles)

Before:

bikeId | brand | price

After ρ(Inv ← Bicycles):

Inv.bikeId | Inv.brand | Inv.priceRelational Algebra Operators: Summary

| Operator | Symbol | Description | Example |

|---|---|---|---|

| Selection | σ | Filter rows | σ(price < 1200)(Bikes) |

| Projection | π | Select columns | π(brand, price)(Bikes) |

| Union | ⋃ | Combine, no duplicates | Avail ⋃ Sold |

| Difference | − | A minus B | Stock − Sold |

| Cartesian Product | × | All pairs | Bikes × Stores |

| Natural Join | ⨝ | Match on common attrs | Orders ⨝ Customers |

| Division | ÷ | Match all values | Students ÷ Required |

| Renaming | ρ | Rename relation/attrs | ρ(Inv)(Bikes) |

Query Optimization

Optimization Principles

- Reduce rows early

- Reduce columns early

- Delay large joins

σ → less rows

π → less columns

⨝ → less comparisons

Execution Flow

- Logical order of operations

- Goal is small intermediates

Relations

↓

Selection

↓

Projection

↓

Join

↓

Result

Pushing Selection Down

- Apply filters before joins

- Joins touch less tuples

Before:

π brand (σ price < 500 (Bicycles ⨝ Stores))

After:

π brand ((σ price < 500 (Bicycles)) ⨝ Stores)

Pushing Selection Down

Slow (filter late)

Step 1: Bicycles ⨝ Stores

→ large intermediate result

Step 2: σ price < 500

→ many rows discarded late

π brand

Fast (filter early)

Step 1: σ price < 500 (Bicycles)

→ small input

Step 2: filtered Bicycles ⨝ Stores

→ fewer comparisons

π brand

Combining Projections

- Nested projections collapse

- Remove unused columns once

Before:

π brand (π bikeId, brand (Bicycles))

After:

π brand (Bicycles)

Eliminating Unnecessary Joins

- No attributes used from table

- No predicates applied

Before:

π brand (Bicycles ⨝ Stores ⨝ Suppliers)

After:

π brand (Bicycles ⨝ Stores)

Reordering Joins

- Associative

- Commutative

- Cost changes, result does not

(Bicycles ⨝ Stores) ⨝ Suppliers

Bicycles ⨝ (Stores ⨝ Suppliers)

Join Reordering

- Orders (O): 1,000,000

- Customers (C): 100,000

- Regions (R): 10

Query:

σ R.name = 'West' (O ⨝ C ⨝ R)

Join Reordering: Bad Order

- Large intermediate result

- Filtering happens late

(O ⨝ C) ⨝ R

O ⨝ C → ~100,000,000,000 rows

Join Reordering: Better Order

- Filter small relation first

- Join reduced inputs

O ⨝ (C ⨝ (σ R='West'(R)))

R: 10 → 1

C: 100,000 → 10,000

O ⨝ C → ~1,000,000,000 rows

Selection and Projection Pushdown

- less rows

- less columns

Before:

σ price < 500 (π brand, price (Bicycles ⨝ Stores))

After:

(π brand, price (σ price < 500 (Bicycles)))

⨝ Stores

Example Query

- Flowers over $10

- Only roses

- Local suppliers

σ Price > 10 ∧ Name='Rose' ∧ Location='Local'

(Flowers ⨝ Suppliers)

Without Optimization

- Join first

- Filter later

Flowers ⨝ Suppliers

→ apply filters

1,000 × 500 = 500K

With Optimization

- Filter first

- Join reduced sets

(σ Local (Suppliers))

⨝

(σ Rose ∧ Price > 10 (Flowers))

100 × 50 = 5K

Large Join Graph

- Flowers: 1,000,000

- Suppliers: 200,000

- Supply: 5,000,000

(σ Rose ∧ Red (Flowers))

⨝ Supply

⨝ (σ Local (Suppliers))

Nested Relational Algebra

- Deeply nested joins

- Multiple selections

π Name (

σ Price > 10 (

Flowers ⨝

(σ Local (Suppliers ⨝

σ Revenue > 1000 (Companies)))

)

)

Optimized Nested Form

- Selections pushed down

- Smaller joins

π Name (

(σ Price > 10 (Flowers))

⨝

(σ Local (Suppliers ⨝

σ Revenue > 1000 (Companies)))

)

Relational Algebra vs SQL

- Logical rules

- Query equivalence

- Executable language

- Cost based optimizer

Practice Questions

- Which join order produces the smallest intermediate result?

- Where should selections be applied?

- Which projections can be pushed down?

- Why does join order affect performance?

SQLite Timing and Setup

- .timer on shows execution time

- journal_mode OFF avoids disk logging

- synchronous OFF skips fsync

- temp_store MEMORY keeps temp data in RAM

- Used only for benchmarking

- Makes slow vs fast visible

- Not for production use

journal_mode = OFF

- Disables rollback journal

- No crash recovery

- Fast writes

Write Operation

↓

[ No Journal ]

↓

Data File

synchronous = OFF

- Skips fsync

- OS buffers writes

- Lower latency

SQLite

↓ write

OS Buffer

↓ (later)

Disk

temp_store = MEMORY

- Temporary tables

- Intermediate results

- No temp disk files

Query

↓

Temp Tables

↓

RAM (Memory)

ANALYZE

- Collects table statistics

- Estimates row counts

- Improves join order

- Helps cost-based optimizer

- No data modification

- Run after bulk inserts

Data Generation (Concept)

- Generate sequences internally

- No external files

- Large but controlled datasets

- Skewed values create selectivity

- Few matches, many non-matches

- Highlights optimization impact

Data Generation (Concept)

- Internal sequence generation

- No external data files

- Deterministic and repeatable

Sequence (1…N)

↓

Value assignment

↓

Skew introduced

↓

Large tables

Set-Based INSERT (Concept)

- Single SQL statement

- Many rows inserted

- No application loop

- Set based execution

seq = [1, 2, 3, ..., 200000]

INSERT customers

FOR EACH x IN seq:

(x, 'West' or 'Other')

Data Generation (SQL Structure)

- Recursive query generates numbers

- COUNT defines table size

- Abort condition stops recursion

WITH RECURSIVE

seq(x)

↓

x starts at 1

↓

x = x + 1

↓

STOP when x reaches limit

↓

INSERT generated rows

Recursive Data Generation

-- Define a recursive sequence

WITH RECURSIVE seq(x) AS (

-- Base case: start at 1

SELECT 1

UNION ALL

-- Recursive step: increment

SELECT x + 1

FROM seq

-- Abort condition

WHERE x < 200000

)

-- Insert generated values

INSERT INTO customers

SELECT

x, -- unique id

CASE -- controlled skew

WHEN x % 100 = 0

THEN 'West'

ELSE 'Other'

END

FROM seq;

What is x in seq(x)?

xis the column name of the recursive tableseqis a temporary result set- Each row in

seqhas one value:x seq= table,x= column

WITH RECURSIVE seq(x) AS (...)

seq

----

x

----

1

2

3

...

Wall Clock Time vs CPU Time

- Wall clock = total elapsed time

- User = CPU executing query

- Sys = OS and kernel work

Time ─────────────────────────────▶

|──────────── real (wall clock) ────────────|

|──────── user (CPU work) ───────|

| sys |

Example:

real = user + sys + waiting

(what the user feels)

Understanding SQLite Timing Output

- real: wall-clock time

- user: CPU time in SQLite

- sys: OS-level overhead

Run Time:

real 0.077

user 0.075025

sys 0.000000

- ~77 ms total execution

- Mostly CPU work

- Negligible system cost

SQLite Demo

- Same query result

- Different execution time

Practice 1

SELECT COUNT(*)

FROM orders o

JOIN customers c ON o.customer_id = c.customer_id

WHERE c.region = 'West';

- How would you make this faster?

- Which table should be filtered first?

σ region='West' (Orders ⨝ Customers)

Practice 2

SELECT *

FROM sales s

JOIN products p ON s.product_id = p.id

WHERE p.category = 'Bike';

- Where should selection be applied?

σ category='Bike' (Sales ⨝ Products)

Practice 3

SELECT o.id

FROM orders o

JOIN customers c ON o.customer_id = c.customer_id

JOIN regions r ON c.region_id = r.id

WHERE r.name = 'West';

- Which join should happen first?

Orders ⨝ (Customers ⨝ σ name='West' (Regions))

Practice 4

SELECT *

FROM enrollments e

JOIN students s ON e.student_id = s.id

WHERE s.major = 'CS';

- How to reduce intermediate size?

Enrollments ⨝ σ major='CS' (Students)

Practice 5

SELECT *

FROM supply sp

JOIN suppliers s ON sp.supplier_id = s.id

JOIN flowers f ON sp.flower_id = f.id

WHERE s.location = 'Local';

- Which table is most selective?

Supply ⨝ (σ location='Local' (Suppliers) ⨝ Flowers)

Practice 6

SELECT *

FROM logs l

JOIN users u ON l.user_id = u.id

WHERE u.active = 1;

- Which relation should be reduced first?

Logs ⨝ σ active=1 (Users)

Practice 7

SELECT *

FROM orders o

JOIN items i ON o.id = i.order_id

WHERE o.date > '2025-01-01';

- What happens if we filter orders first?

σ date>'2025-01-01' (Orders) ⨝ Items

Practice 8

SELECT *

FROM activity a

JOIN conditions c ON a.id = c.activity_id

WHERE c.type = 'once';

- Where is the selective predicate?

Activity ⨝ σ type='once' (Conditions)

Practice 9

SELECT *

FROM payments p

JOIN users u ON p.user_id = u.id

JOIN countries c ON u.country_id = c.id

WHERE c.code = 'US';

- Which join should be last?

Payments ⨝ (Users ⨝ σ code='US' (Countries))

Practice 10

SELECT *

FROM reviews r

JOIN products p ON r.product_id = p.id

WHERE p.rating > 4;

- How does early filtering help?

Reviews ⨝ σ rating>4 (Products)

Answer 1

SELECT COUNT(*)

FROM (

SELECT customer_id

FROM customers

WHERE region = 'West'

) c

JOIN orders o ON o.customer_id = c.customer_id;

-- Before

σ region='West' (Orders ⨝ Customers)

-- After

Orders ⨝ (π customer_id (σ region='West' (Customers)))

Answer 2

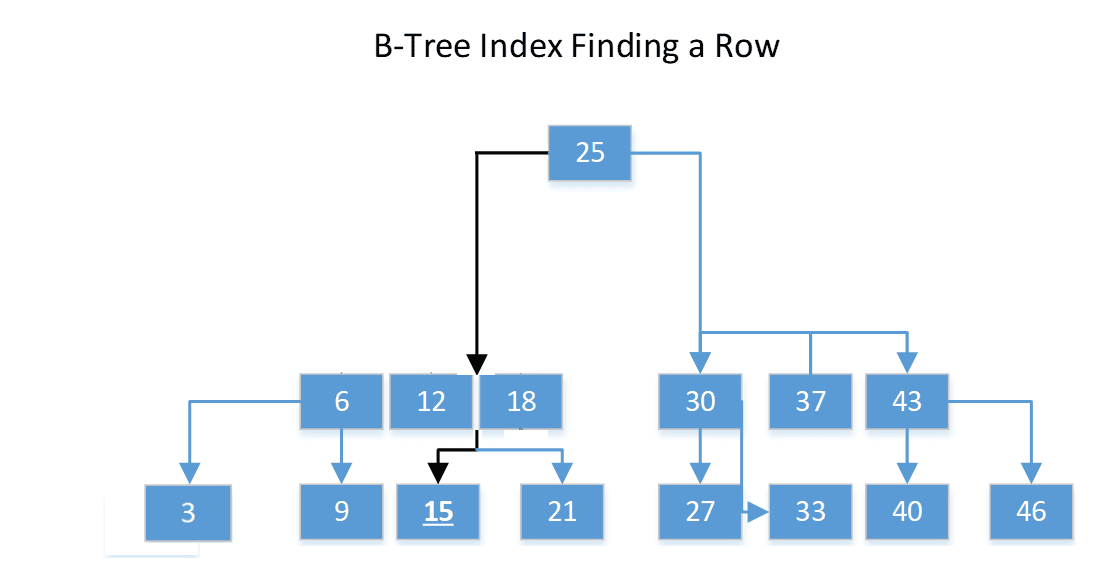

SELECT s.sale_id, s.product_id, s.qty

FROM (

SELECT id

FROM products

WHERE category = 'Bike'

) p

JOIN sales s ON s.product_id = p.id;

-- Before

σ category='Bike' (Sales ⨝ Products)

-- After

Sales ⨝ (π id (σ category='Bike' (Products)))

Answer 3

SELECT o.id

FROM (

SELECT id

FROM regions

WHERE name = 'West'

) r

JOIN customers c ON c.region_id = r.id

JOIN orders o ON o.customer_id = c.customer_id;

-- Before

π o.id (σ name='West' (Orders ⨝ Customers ⨝ Regions))

-- After

π o.id (

Orders ⨝

(Customers ⨝ (π id (σ name='West' (Regions))))

)

Answer 4

SELECT e.student_id, e.course_id

FROM enrollments e

JOIN (

SELECT id

FROM students

WHERE major = 'CS'

) s ON e.student_id = s.id;

-- Before

σ major='CS' (Enrollments ⨝ Students)

-- After

Enrollments ⨝ (π id (σ major='CS' (Students)))

Answer 5

SELECT sp.supplier_id, sp.flower_id

FROM (

SELECT id

FROM suppliers

WHERE location = 'Local'

) s

JOIN supply sp ON sp.supplier_id = s.id

JOIN flowers f ON sp.flower_id = f.id;

-- Before

σ location='Local' (Supply ⨝ Suppliers ⨝ Flowers)

-- After

((Supply ⨝ (π id (σ location='Local' (Suppliers)))) ⨝ Flowers)

Answer 6

SELECT l.log_id, l.user_id, l.action

FROM logs l

JOIN (

SELECT id

FROM users

WHERE active = 1

) u ON l.user_id = u.id;

-- Before

σ active=1 (Logs ⨝ Users)

-- After

Logs ⨝ (π id (σ active=1 (Users)))

Answer 7

SELECT o.id, i.item_id, i.qty

FROM (

SELECT id

FROM orders

WHERE date > '2025-01-01'

) o

JOIN items i ON o.id = i.order_id;

-- Before

σ date>'2025-01-01' (Orders ⨝ Items)

-- After

((π id (σ date>'2025-01-01' (Orders))) ⨝ Items)

Answer 8

SELECT a.id, a.title, c.type

FROM activity a

JOIN (

SELECT activity_id, type

FROM conditions

WHERE type = 'once'

) c ON a.id = c.activity_id;

-- Before

σ type='once' (Activity ⨝ Conditions)

-- After

Activity ⨝ (π activity_id, type (σ type='once' (Conditions)))

Answer 9

SELECT p.payment_id, p.amount

FROM (

SELECT id

FROM countries

WHERE code = 'US'

) c

JOIN users u ON u.country_id = c.id

JOIN payments p ON p.user_id = u.id;

-- Before

π payment_id, amount (σ code='US' (Payments ⨝ Users ⨝ Countries))

-- After

π payment_id, amount (

Payments ⨝

(Users ⨝ (π id (σ code='US' (Countries))))

)

Answer 10

SELECT r.review_id, r.product_id, r.stars

FROM reviews r

JOIN (

SELECT id

FROM products

WHERE rating > 4

) p ON r.product_id = p.id;

-- Before

σ rating>4 (Reviews ⨝ Products)

-- After

Reviews ⨝ (π id (σ rating>4 (Products)))

Module 4

Relational Database Interfaces

┌──────────────────────┐

│ Admin Interface │

│ (CLI · GUI · Tools) │

└──────────┬───────────┘

│

│

┌──────────────────────┐ │ ┌──────────────────────┐

│ Application Code │────┼────│ Data Tools / ETL │

│ (Web · Mobile · API) │ │ │ (Import · Export) │

└──────────┬───────────┘ │ └──────────┬───────────┘

│ │ │

│ ▼ │

│ ┌────────────────┐ │

└───────▶ DBMS ◀─────┘

│ (SQLite / PG) │

│ parse · exec │

│ enforce rules │

└────────────────┘

▲

│

┌──────────┴───────────┐

│ Reporting / BI │

│ (Queries · Views) │

└──────────────────────┘

Today

- Practice Questions

- Relational Database Interfaces:

- Admin Interface

- Command-Line Interface (CLI)

- Application Programming Interface (API)

- Application User Interface (UI)

- PostgreSQL

- Homework 2, Lab 2

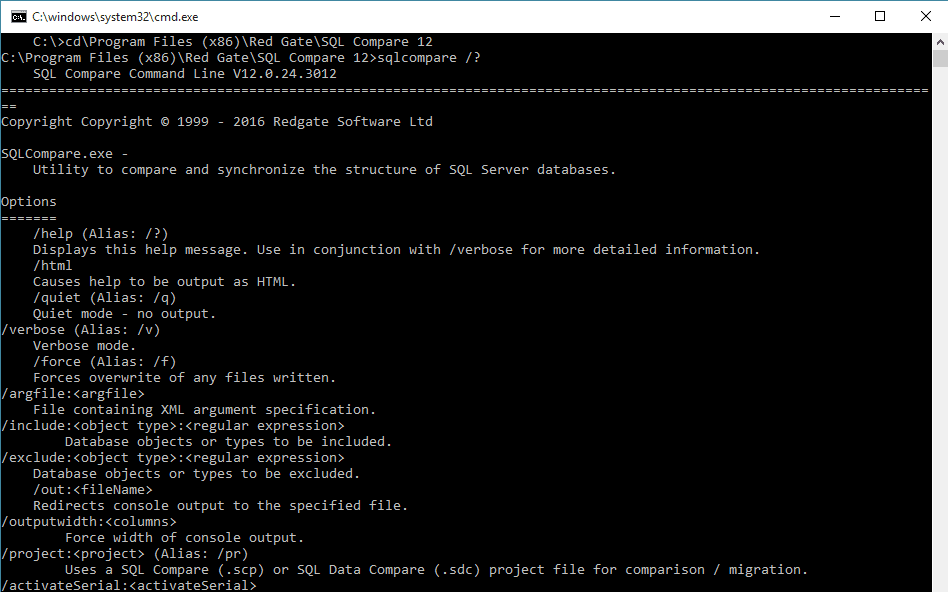

1970s: The Relational Model Becomes Real

- Relational model introduced

- Research prototypes

- Early query languages

- Interfaces are terminals

Early Client–Database: Terminal Direct (Before GUI)

- Users worked directly in a terminal

- No graphical tools: only text commands and scripts

- SQL typed manually and executed immediately

- Automation came from shell scripts and batch jobs

- Fast and precise, but less friendly for non technical users

┌──────────────────────┐

│ User │

│ (DBA · Developer) │

└──────────┬───────────┘

│

▼

┌──────────────────────┐

│ Terminal │

│ (CLI · Shell) │

│ SQL · scripts │

└──────────┬───────────┘

│

│ SQL / Batch Jobs

▼

┌──────────────────────┐

│ Database │

│ (Tables · Rules) │

│ constraints · ids │

└──────────────────────┘

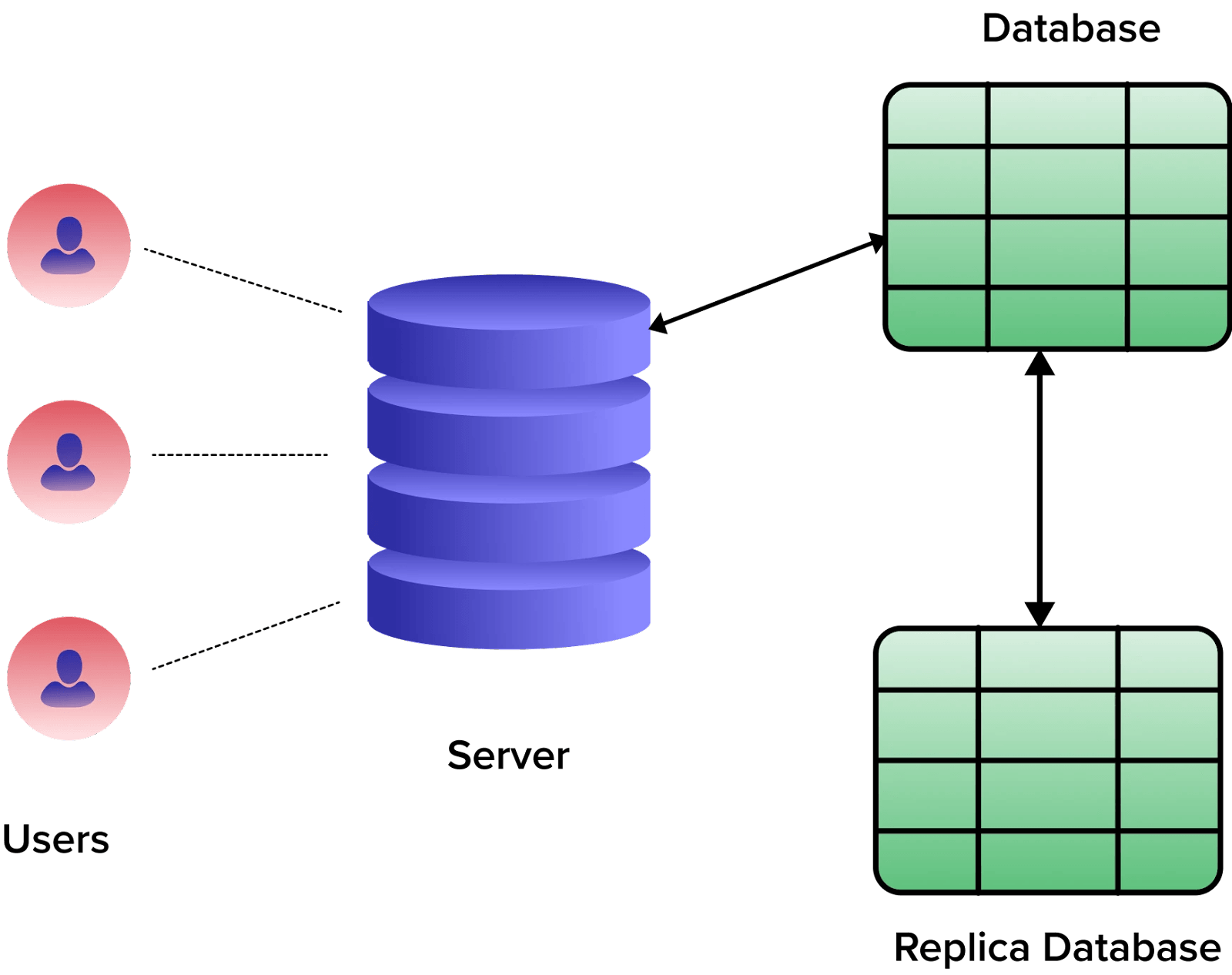

1980s: Commercial RDBMS and Admin Tools